bomonike

Deep Dive tips and tricks to get certified: Step-by-step tutorials, videos, practice exams.

Overview

- Anthropic the Company

- Competition

- Claude Support

- Claude Products

- Features Glossary

- Productivity: What can you do with Claude?

- Pricing Subscriptions

- Quizzes

- Tutorials

- Hardware Needed

- Installs

- Claude Desktop Key Shortcuts

- / slash commands

- How Claude Code Works

- Custom Slash Commands

- Claude Folders and Files

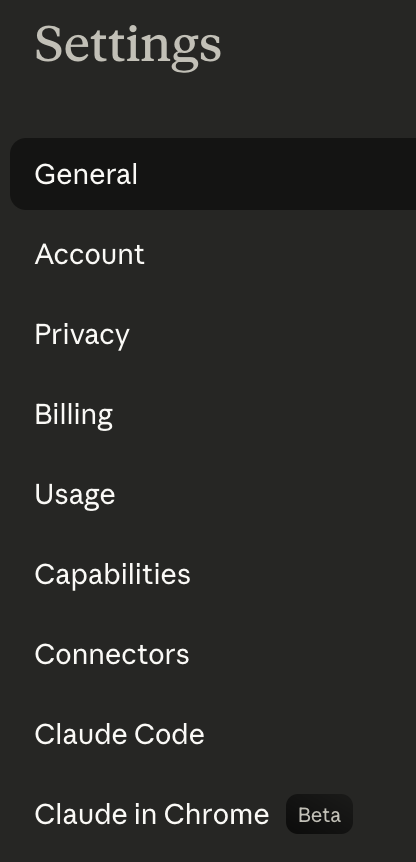

- Settings menu and keyboard shortcuts

- Tengu UI Customizations

- @DESIGN.md Design System

- Agents

- Hooks

- .gitignore

- Permissions

- Python Project

- Python Prompt examples:

- Settings config

- /cost tokens spent

- Skills

- Rules

- Vulnerability Scanning

- Create an iPhone app

- Claude Partner Network

- Certifications

- Claude Certified Architect (CCA), Foundations

- Models

- Advanced reasoning:

- Common coding tasks:

- Quick code completions and suggestions:

- Chat API call using Claude Opus

- AI Fluency Class

- Text Chat using Claude API

- External Tool Use

- Plugins

- MCP

- Tools

- Run in Containers

NOTE: Content here is my personal opinion, and is not intended to represent any employer (past or present). “PROTIP:” here highlights information I haven’t seen elsewhere on the internet because it is hard-won, little-known but significant facts based on my personal research and experience.

This article was hand-crafted based on AI responses.

Anthropic the Company

- Anthropic was founded in 2021 by seven former OpenAI employees, including now-CEO Dario Amodei, who was OpenAI’s Vice President of Research. Key personnel now:

- Boris Cherny, Engineering

- Katelyn Lesse, Engineering

- Cat Wu, Product

- Angela Jiang, Product

- Visit https://anthropic.com/ - the corporate marketing landing page.

  It says “Anthropic is a public benefit corporation dedicated to securing its [AI’s] benefits and mitigating its risks.”

- Anthropic’s entry on LinkedIn classifies the company in the “Research Services” industry:

  “Anthropic is an AI safety and research company working to build reliable, interpretable, and steerable AI systems.” 3M followers. 501-1K employees.

- On Glassdoor.com, 86% of Anthropic employees would recommend the company to a friend, which is high praise indeed.

- Click “Read more” at https://www.anthropic.com/research about results from Anthropic’s survey of users.

- Click the “Posts” tab to view announcements.

- Click “Ads” to see videos of 2026 Super Bowl commercials.

- Article by Gigi Sayfan CCDD (Claude Code Deep Dive)

- “Claude” on LinkedIn.com says “Claude is an AI assistant built by Anthropic to be safe, accurate, and secure.” in Technology, Information and Internet. 884K followers.

PROTIP: Claude is named for Claude Shannon of Bell Labs, who founded information theory (“A Mathematical Theory of Communication”, 1948), which made AI possible.

VIDEO: “Brainstorm in Claude, build in Cowork”

Competition

Claude competes with agentic coding tools (aka coding agent IDEs) that read a codebase, edit files, and run commands:

- Amazon’s Kiro CLI & IDE for spec-driven development. But it needs to be constantly connected to AWS, which eats up credits.

- warp.dev (which does a great job of detecting coding and CLI errors and suggesting fixes), now open source

- OpenAI’s Codex VIDEO

- OpenCode

- Perplexity

- Google Gemini Gemma & Antigravity IDE

- Mistral AI

- Devin by Cognition (merged)

- Temporal’s Pydantic

https://www.tbench.ai/leaderboard (Terminal Bench Leaderboard) benchmarks AI agents’ terminal mastery, run with the Harbor framework.

Claude Support

REMEMBER: BLAH: Anthropic doesn’t offer phone or live-agent support, only the chat at support.claude.com.

Claude Products

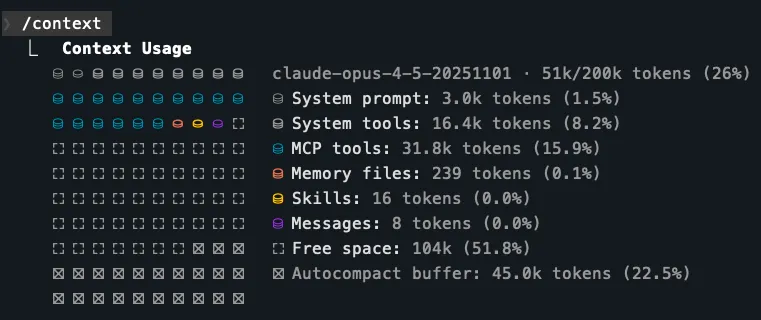

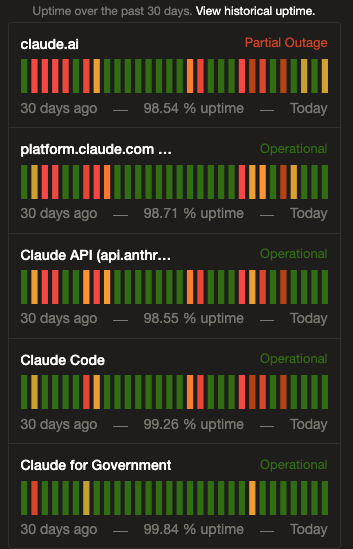

Uptime shows Anthropic’s own production environments:

- claude.ai is the website reached from an internet browser. Its menu:

  Meet Claude - Platform - Solutions - Pricing - Resources - Contact sales - Try Claude

- platform.claude.com is the Claude Console for each organization: Dashboard, Workbench, Files, Skills, and Documentation. Claude also creates the evaluation automation that it runs. “Your Guide To Local AI - Hardware, Setup and Models”

- Claude API refers to the endpoint listening for SDK requests from procedural programming code, or via the claude-agent-sdk wrapper around claude -p commands.

  REMEMBER: The -p flag specifies a non-interactive (aka “headless”) task: no prompts, no confirmations. It runs and returns the result. The SDK spawns the Claude Code CLI as a subprocess and communicates over stdin/stdout via JSON-lines. Compare it to the Anthropic Client SDK. Specify permissions with --allowedTools and --disallowedTools.
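The headless call described above can be sketched in Python as a thin wrapper that builds the argument list for `claude -p`. This is a minimal sketch, not the SDK's actual implementation: the function names are hypothetical, `--output-format json` is an assumption not mentioned in the text, and the tool rules are assumed to be accepted as a comma-separated list.

```python
import json
import shlex
import subprocess


def build_headless_command(prompt, allowed_tools=(), disallowed_tools=()):
    """Build the argv list for a non-interactive `claude -p` call."""
    # Assumption: the CLI prints a JSON result when given --output-format json.
    cmd = ["claude", "-p", prompt, "--output-format", "json"]
    if allowed_tools:
        cmd += ["--allowedTools", ",".join(allowed_tools)]
    if disallowed_tools:
        cmd += ["--disallowedTools", ",".join(disallowed_tools)]
    return cmd


def run_headless(prompt, **tool_rules):
    """Run the command and parse the JSON that the CLI prints on stdout."""
    completed = subprocess.run(
        build_headless_command(prompt, **tool_rules),
        capture_output=True, text=True, check=True,
    )
    return json.loads(completed.stdout)


if __name__ == "__main__":
    # Print the command instead of running it, so no tokens are spent.
    print(shlex.join(build_headless_command(
        "Summarize README.md", allowed_tools=["Read"], disallowed_tools=["Bash"])))
```

Keeping the command builder separate from the runner makes the permission flags easy to review before anything is executed.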

-

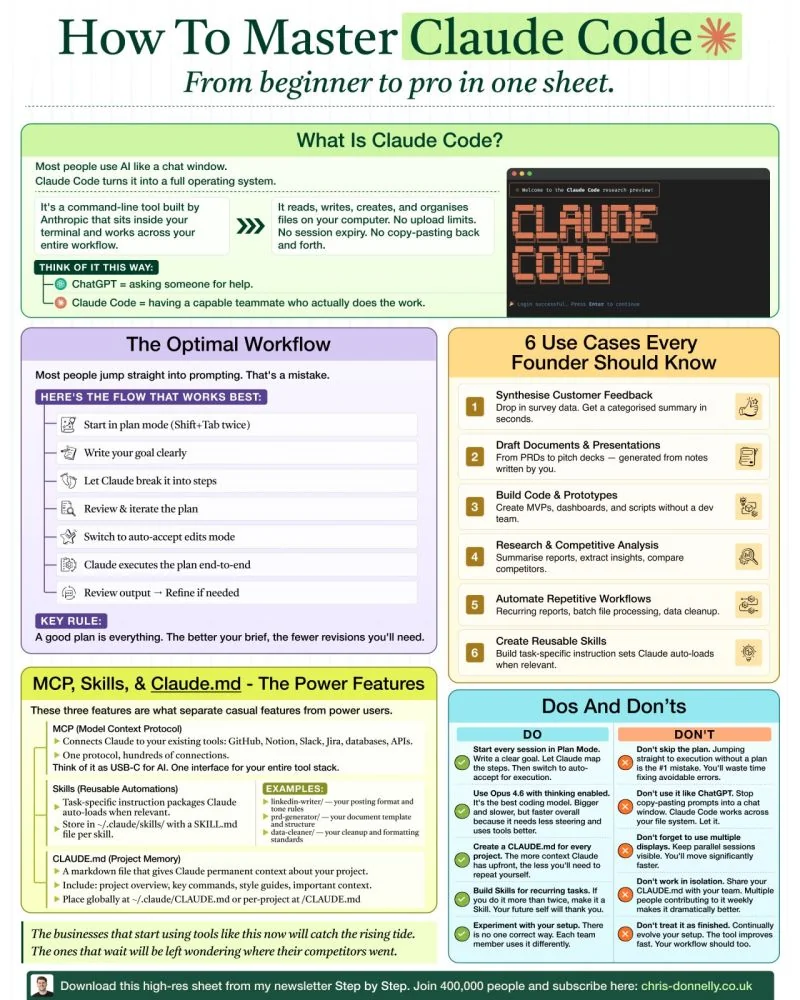

Claude Code is “like talking to a capable teammate who actually does the work”. Instead of hand coding, human app designers now speak natural language conversations with Claude Code to write design specs from which both infrastructure creation and programming code are generated.

“AI will soon be writing 90 percent of all code.” — Dario Amodei, Anthropic CEO, March 10 2025

That is why instead of human employees, companies will be paying for AI tokens to do work. That’s the basis for high valuations and unpresedented investments in gigantic data centers using AI chips.

-

Claude CoWork can interact with you computer’s files, mouse, keyboard, and screen, to operate any app. VIDEO

-

The history of the US Government’s use of Claude for domestic surveillance or in fully autonomous weapons is summarized in https://en.wikipedia.org/wiki/Anthropic

It says the company is headquartered in San Francisco’s Foundry Square (near the Bay Bridge) at 500 Howard and First Streets (across from Chipotle & BlackRock and close to the SalesForce tower’s BART/busses).

REMEMBER: Anththropic does not host their own models but use AWS, Azure, GCP, etc. Claude is the only frontier AI model available on all three leading cloud providers: AWS, Google Cloud, and Microsoft. Claude would also be integrated into the Databricks Data Intelligence Platform and Snowflake’s Lakehouse databases.

PROTIP: That enables us to bring costs down by using a downloaded local foundation model while using Claude Code/Work.

-

Glasswing secures software using the Mythos frontier model built using NVIDIA’s GP3 chips.

-

Claude Dispatch enables cross-device workflows where tasks move from mobile app to desktop app which stays awake (doing whatever else).

-

“Memory on Claude Managed Agents” enables agents to learn from past sessions and share what they’ve learned with other agents. The memories mounts directly onto a filesystem so developers can keep control over what these agents retain - the same bash and code execution capabilities that make it effective at agentic tasks. “With filesystem-based memory, our latest models save more comprehensive, well-organized memories and are more discerning about what to remember for a given task.”

-

Claude “Computer Use”: Computer Use utilizes the capabilities of the latest models including image reasoning and tool use to enable an LLM-based agent to use a computer. Like a human user, the model processes an image of the screen, analyzes it to understand what’s going on, and navigates the computer by issuing mouse clicks and generating keyboard strokes to get things done.

VIDEO: Fun fact: 90% of code in Claude Code is written by itself, in TypeScript, using the Yoga embeddable layout engine (to determine the size and position of boxes), React, Ink (a React-like library for building interactive CLI apps in JS), and the Bun toolkit (acquired by Anthropic in ‘25).

VIDEO: Although Temporal is used on Claude, the leak (https://ccunpacked.dev) revealed that Claude is vulnerable to the remote access trojan in the Axios 1.14.1 npm package. Get rid of the vulnerability.

The team ships around 5 releases per engineer each day. AI agents are used for code reviews and tests; other practices include a renaissance of test-driven development (TDD), automated incident response, and cautious use of feature flags. According to “Inside Claude Code: The Architecture of AI Agents” by PY, the core of the agent is a while loop.
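The "while loop" idea can be illustrated with a toy agent loop. This is a minimal sketch under stated assumptions, not Claude Code's actual source: `run_agent`, `fake_model`, and the `count_lines` tool are hypothetical stand-ins, and the message format is invented for the example.

```python
def run_agent(model, tools, user_request, max_turns=10):
    """Loop until the model stops asking for tools (or max_turns is hit)."""
    messages = [{"role": "user", "content": user_request}]
    turns = 0
    while turns < max_turns:
        turns += 1
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        if reply.get("tool") is None:
            # No tool requested: the reply is the final answer.
            return reply["text"], messages
        # Run the requested tool and feed its result back to the model.
        result = tools[reply["tool"]](**reply["args"])
        messages.append({"role": "user", "content": {"tool_result": result}})
    return None, messages


def fake_model(messages):
    """Stub model: asks for one tool call, then answers using its result."""
    last = messages[-1]["content"]
    if isinstance(last, dict) and "tool_result" in last:
        return {"tool": None, "text": f"The file has {last['tool_result']} lines."}
    return {"tool": "count_lines", "args": {"text": "a\nb\nc"}}


TOOLS = {"count_lines": lambda text: len(text.splitlines())}

answer, transcript = run_agent(fake_model, TOOLS, "How long is the file?")
print(answer)  # prints: The file has 3 lines.
```

The `max_turns` cap is a design choice of this sketch, so a model that keeps requesting tools cannot loop forever.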

Tool selection: Because raw GUI control is powerful, but also brittle, slower, and much harder to govern, the Claude ecosystem is a layered agent system where connectors (with structured contracts) via MCP apps are preferred, browser automation (of forms on websites) is secondary, and raw full-screen (difficult to govern) desktop control is the fallback layer.
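The layered preference described above (connectors first, browser automation second, raw desktop control last) can be expressed as a simple ordering. This is an illustrative sketch only: the `Layer` enum and `pick_layer` function are hypothetical names, not part of any Claude API.

```python
from enum import IntEnum


class Layer(IntEnum):
    """Lower value = more structured and easier to govern."""
    CONNECTOR = 1   # MCP connector with a structured contract
    BROWSER = 2     # browser automation of website forms
    DESKTOP = 3     # raw full-screen mouse/keyboard control


def pick_layer(available):
    """Return the most-preferred layer among those able to perform the task."""
    if not available:
        raise ValueError("no layer can perform this task")
    return min(available)


print(pick_layer({Layer.DESKTOP, Layer.BROWSER}).name)  # prints: BROWSER
```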

References:

- https://newsletter.pragmaticengineer.com/p/how-claude-code-is-built

Features Glossary

Automation provided by AI agents has gone beyond auto-complete of code.

- Connectors (under the “Customize” and Settings menu items) enable Claude to interact with external platforms such as GitHub, Gmail, Google Calendar, Google Drive, etc.

- GitHub Integration: Deep integration with GitHub for PR reviews, issue management and even CI/CD.

- An agentic code harness is what enables an LLM to be agentic: to run in sandboxes, accept prompts, use tools, etc.

- Memory system: CLAUDE.md and other files that provide persistent context across sessions.

- Slash commands: Powerful keywords to control agent behavior. VIDEO

- Skills (under the Customize menu item) enable new knowledge to be dynamically obtained by Claude or subagents based on a minimal description and the current query, as opposed to always taking up room lurking in the context memory. Skills are now integrated with commands.

- Subagents: Create specialized subagents for different tasks, each with its own context window. REMEMBER: Subagents operate with isolated context and do NOT share memory with the coordinator. Every piece of information a subagent needs must be passed to it explicitly.

- MCP Support: Extend it with any MCP tool to access APIs, databases and other external systems.

- MCP Tools define what MCP clients should run to take action.

- Hooks are small Python and/or Bash shell scripts (agentic workflows) that run when triggered by events: “PreToolUse” (before a tool such as Read runs) or “PostToolUse” (after a tool such as Write or Edit completes). REMEMBER: A hook can also block Claude from taking an action unless a specific condition has been met.

- Plugins (under the Customize menu item) are “packaged feature bundles” that may bundle hooks, slash commands, and skills together for sharing with others. The “plugins” folder contains a “blocklist.json” file, a “known_marketplaces.json” file and the marketplaces folder, starting with “claude-plugins-official”.

- The Claude Agent SDK is used to build agentic AI systems beyond coding assistance.

- “Constitutional AI” is a training approach developed by Anthropic to help AI models self-evaluate and revise their own responses, based on a predefined set of ethical guidelines and principles (harmless, honest, etc.) rather than RLHF (Reinforcement Learning from Human Feedback). “intent classification” in Claude’s safety system
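To make the Hooks entry in the glossary above more concrete, here is a sketch of a hook that blocks an action. It assumes the hook receives a JSON event on stdin with `tool_name` and `tool_input` fields, and that exit code 2 blocks the tool call with the stderr message passed back to Claude; check the current hooks documentation before relying on these details. The blocked suffixes and the `--hook` switch are hypothetical choices for this example.

```python
import json
import sys

BLOCKED_SUFFIXES = (".env", ".pem")  # hypothetical policy for this sketch


def decide(event):
    """Return (exit_code, message) for one PreToolUse event dictionary."""
    if event.get("tool_name") != "Read":
        return 0, ""
    path = event.get("tool_input", {}).get("file_path", "")
    if path.endswith(BLOCKED_SUFFIXES):
        # Assumption: exit code 2 tells Claude Code to block the tool call.
        return 2, f"Blocked: reading {path} is not allowed by this hook."
    return 0, ""


def main():
    """Read one event from stdin, report any block reason, exit with the code."""
    code, message = decide(json.load(sys.stdin))
    if message:
        print(message, file=sys.stderr)
    sys.exit(code)


# The --hook switch is a choice of this sketch, so importing or testing
# the file never waits on stdin.
if __name__ == "__main__" and sys.argv[1:] == ["--hook"]:
    main()
```

Keeping the decision in a pure function (`decide`) means the policy can be unit-tested without starting Claude Code.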

Productivity: What can you do with Claude?

Target one job that has these three qualities:

- It wastes real time

- It happens often

- It has multiple steps

Good first examples with a small test group:

- turn meeting notes into action items

- research a list of companies and make a short brief

- respond to support emails with draft replies

- pull weekly numbers and write a simple report

- check a few sources and summarize what changed

PROTIP: Improvements in net productivity can be confidently monetized when features are combined and consistently applied:

- Customer Support Resolution Agent (Agent SDK + MCP + escalation)

- Code Generation with Claude Code (CLAUDE.md + plan mode + slash commands)

- Multi-Agent Research System (coordinator-subagent orchestration)

- Developer Productivity Tools (built-in tools + MCP servers). See https://github.com/anthropics/courses/blob/master/tool_use/README.md

- Claude Code for CI/CD (non-interactive pipelines + structured output)

- Structured Data Extraction (JSON schemas + tool_use + validation loops)

CAUTION: Cowork activity is not captured in audit logs or Compliance APIs today, which is why it is not for regulated workloads.

“5 ‘Boring’ AI Workflows that Businesses Actually Want (And How to Sell them)” by Nate Herk of AI Automation

Pricing Subscriptions

PROTIP: merlin.ai’s bulk purchasing costs $5/mo ($60/year) (with code AZ5) to access several LLMs (Claude Sonnet 4.5, OpenAI GPT5, etc.), an alternative to paying for a Claude AI subscription at https://claude.com/pricing:

- Claude Free

- Claude Pro $17/month to use Claude Code and Cowork

- Claude Max $100/month for 5x or 20x more usage than Pro

- Teams $20/month

- Enterprise

CAUTION: In April 2026 Anthropic removed the $17/month tier and requires the $100/month tier for use of Claude Code.

CAUTION: Token charges for the same prompt are not consistent over time.

CAUTION: Claude’s token charges are more complicated than figuring out your taxes.

Quizzes

Tutorials

Anthropic’s own tutorials are at:

- https://anthropic.skilljar.com

- https://github.com/anthropics/courses

- Anthropic is training the country of Iceland

On Coursera, Stephen Grider of Anthropic built

- Claude Code in Action

- Building with the Claude API

- Introduction to Model Context Protocol

- Model Context Protocol: Advanced Topics

Intro:

- I Took All 7 Anthropic Courses in One Weekend (Honest Review) by Jas Wong

-

12 hour “Claude Code Essentials” exam released by Andrew and Gunnar Grosch referencing github.com/enthropics on March 20, 2026 via freeCodeCamp.org to plug $34 ExamPro study materials to pass ExamPro.co’s own “EXP-CLAUDECODE-01”.

- Local AI Agents In 26 Minutes by Tina Huang

- 3-hr Practical Guide by Academind by Maximilian Schwarzmüller

Articles:

- Teaching Claude Code How You Work: CLAUDE.md in Practice

- From Zero to Agentic Coding: Running Claude Code with Amazon Bedrock

YouTube videos with no subscription:

- VIDEO: “Claude Isn’t Safe. This Anthropic Whistleblower Has the Proof.” by Novara Media quoting Mrinank Sharma’s resignation letter.

- “The Ultimate Beginner’s Guide To Claude” by AI Edge on Telegram

- “Claude Code - Full Tutorial for Beginners” by Tech With Tim offering newsletter

- “Let Claude Cowork Work For You, here’s how” https://www.youtube.com/watch?v=aWAfpOi91vc&pp=ugUEEgJlbg%3D%3D

- “Making Claude Code more useful with TDD and XP Techniques” by FeedbackDrivenDev https://www.youtube.com/watch?v=rSoeh6K5Fqo

- “12 Hidden Settings To Enable In Your Claude Code Setup” by AI LABS

- “You Can Build The Craziest Things with Claudes Agent SDK” by Traversy Media

YouTube videos peddling subscriptions:

- YouTube by Mark Kashef pushes $64/mo Early AI-dopters

- “Claude Computer Use Just Dropped, Here’s How to Hack It” (Use the Min browser to avoid blocking) to plug $184/mo Maker School

- “How to Build Claude Agent Teams Better Than 99% of People” by Nate Herk - AI Automation of $99/mo AI Automation Society Plus

by Brock Mesarich - AI for Non Techies to pitch $47/mo AI for Non-Techies: “Dispatch” from your phone.

- “Claude’s Biggest Update Just Dropped… (Computer Use)”

-

“How to Use Claude Cowork Projects Better Than 99% of People”

- “Anthropic’s SECRET Model Just Leaked (INSANE)” pushing $99/yr ShippingSkool

- “Anthropic’s NEW Claude Architect Guide In 39 Minutes” by Mark Kashef to pitch $64/mo Early AI-dopters

- “The Easiest Way to Get Ahead With Claude Code” by Simon Scrapes pushing $37/mo Scrapes

- “Claude’s New AI Auto-Mode Runs Itself Now” by AI News Today - Julian Goldie Podcast to plug $59/mo AI Profit Boardroom

- “Claude Explained - Chat vs Cowork vs Code” by Oliur Online to plug free resources and $179/yr Digital Creator Club

- “Claude Code Just Got 10X Powerful (10 Insane Features)” by The AI Growth Lab with Tom to push $500 one-time “30 day Challenge”

- “Build & Sell with Claude Code (10+ Hour Course)” by Nate Herk pushing $99/mo AI Automation Society Plus

- “CLAUDE CODE ADVANCED: Everything They Don’t Teach You” https://www.youtube.com/watch?v=UPtmKh1vMN8 by Nick Saraev (https://nicksaraev.com/) pushing $184/mo Maker School 2100.

- “5 Open Source Repos That Make Claude Code UNSTOPPABLE (March 2026)” by Chase AI pushing $97/mo Chase AI+ 837.

- AutoResearch - https://github.com/karpathy/autoresearch

- OpenSpace - https://github.com/HKUDS/OpenSpace

- CLI-Anything - https://github.com/HKUDS/CLI-Anything

- Claude Peers MCP - https://github.com/louislva/claude-peers-mcp

- Google Workspace CLI - https://github.com/googleworkspace/cli

Others when you’re through with the above:

https://www.youtube.com/watch?v=uUGfo8QOsW0&pp=ugUEEgJlbg%3D%3D Claude Mythos 5: Most Powerful Model Ever! AGI, GLM 5.1, Claude Code Update & Codex Plugins! AI NEWS

Hardware Needed

- If you need to buy a machine, consider that Mac Minis have good resale value and good value at the mid-tier vs. a PC server with an NVIDIA GPU. Apple does overcharge for memory. The Apple M3 Max has more memory bandwidth than the newer M4 Pro.

- Buy two 2+ TB USB drives for backup: one to keep plugged in and another for daily full backups that you leave in a Faraday bag.

QUESTION: Can a Chromebook (with no large RAM or hard drive) be used?

Installs

- To enable installation of utility packages on macOS, install the Homebrew package manager for macOS, from any folder:

      /bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

  NOTE: It makes use of Ruby that comes with all macOS.

- To freely open apps from any folder, add the two app paths (separated by a colon) to .bash_profile:

      /Applications:~/Applications

- Optionally, install an alternative to macOS native Terminal app:

      brew install --cask kitty
      open -a kitty

  PROTIP: 3rd-party Terminal apps Kitty and Ghostty natively support notification events without additional configuration (which iTerm2 requires).

My Claude Code Template

PROTIP: Load my templates repo from GitHub, which contains a curated set from other tutorials.

- In your OS Terminal app, create a “bomonike” folder.

      mkdir -p ~/bomonike

- In your OS Terminal app, clone just the main branch:

      git clone https://github.com/bomonike/claude-templates.git --depth 1
      cd claude-templates

PROTIP: Use this as your base project when you install Claude.

aliases.sh

    alias cl='claude --dangerously-skip-permissions'
    alias clc='cl --continue'  # resume the last session with the context/history from the previous session
    # Resume Claude with the context/history from the previous session but still be able to get back to that point later:
    alias clf='claude --resume --fork-session'

Visual Studio Code Install

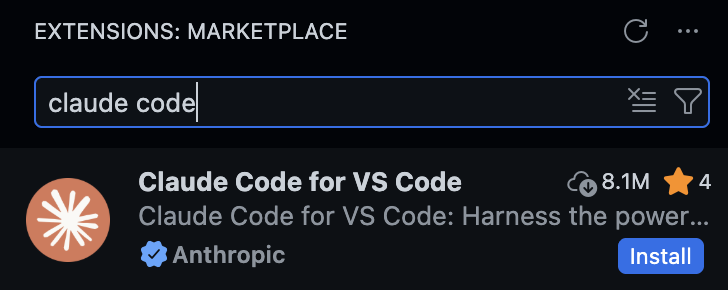

- Install Homebrew (which is based on Ruby).

- Install VSCode and start it:

      brew install --cask visual-studio-code
      code

- Click the Extensions icon and enter “Claude Code” in the Marketplace search.

- Click “Install” for the one from “Anthropic” (marked with a blue star).

Ollama Install

To run LLMs locally on your machine, off the cloud (for more privacy), install Ollama. On a Mac Mini:

- Install Ollama: VIDEO, BLOG:

      brew install ollama

  That installs folders that need to be removed to fully uninstall:

  - ~/.ollama to hold models and configuration. Under that, ~/.ollama/logs contains logs.

  - "~/Library/Application Support/Ollama"

  - "~/Library/Saved Application State/com.electron.ollama.savedState"

  - ~/Library/Caches/com.electron.ollama/

  - ~/Library/Caches/ollama

  - ~/Library/WebKit/com.electron.ollama

- Get an Ollama account and API key:

- https://ollama.com

- Click “Sign in”. This creates a client_id in the URL.

- Click “Sign Up”. Type your email address. Continue.

- Create a password using your secrets manager and switch to paste it.

- Switch to your email client and copy the code.

- Copy the code and switch back to the Ollama.com to paste it.

- Click “Sign in”. Type your email and click Continue.

- Copy your password from your secrets manager and switch to paste it.

- At https://ollama.com/pricing notice the “Free” account enables you to “Run models on your hardware” and the paid subscriptions enable you to run their cloud models. “Add API Key”.

- Type a key name (using a date such as 261231). Click “Generate API key”.

- Click the copy icon to copy the API key and paste it in your secrets manager.

- Optionally, create and add your public SSH key, which starts with “ssh-ed25519 AAA”.

- At https://ollama.com/settings click “Create API key” to run models on their cloud.

- In your file, construct the variable by pasting the API key copied from your secrets manager:

      OLLAMA_API_KEY=your_api_key

- Sign into the Ollama CLI:

      ollama signin

- In the window that opened automatically to https://signin.ollama.com/… on your default browser app, type your email and password to sign in to your paid account.

Setup auth for free use of moonshot.ai’s Kimi model downloaded for running on Ollama via local relay path.

Ollama model selection

- At https://ollama.com/search select a cloud model and copy its model id listed to paste in a variable. For example:

      #MY_MODEL_ID="kimi-k2.5:cloud"
      MY_MODEL_ID="gpt-oss:120b-cloud"
      ollama show "$MY_MODEL_ID"

  For example, “context length” is 131072:

      Model
        architecture        gptoss
        parameters          116829156672
        context length      131072
        embedding length    2880
        quantization        MXFP4

      Capabilities
        completion
        tools
        thinking

  | model ID | context length | embedding length | VRAM |
  |---|---|---|---|
  | deepseek-v3.1:671b-cloud | 163840 | 7168 | ~128 GB |
  | deepseek-r1:14b | 131072 | 5120 | ? |
  | gpt-oss:20b-cloud | 131072 | 2880 | ? |
  | gpt-oss:120b-cloud | 131072 | 2880 | ? |
  | kimi-k2:1t-cloud | 262144 | 2048 | ? |
  | qwen3-coder:480b-cloud | 262144 | 2048 | ? |

  Multiply the two numbers (with the other factors below) to calculate the KV cache size:

      ≈ embedding_length × context_length × 2 × num_layers × bytes_per_element

  Since there are 2 bytes per element for fp16, VRAM for deepseek-r1:14b:

      = 131072 × 5120 × 2 × 48 × 2 (fp16) ≈ ~128 GB (full context, fp16)

  AirLLM?

DEFINITION: The embedding length is the size of each vector representing each token internally. It is larger for more complex models. The embedding length needs to match the “dimensions=” spec to define a vector DB used to create RAG pipelines for semantic search.
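The KV cache arithmetic above can be checked with a few lines of Python. This is a sketch of the article's own formula, using its numbers for deepseek-r1:14b (48 layers is taken from the calculation in the text). Treat the result as a rough upper bound: the function name is hypothetical, and real runtimes may store fewer key/value heads or quantize the cache.

```python
def kv_cache_bytes(embedding_length, context_length, num_layers, bytes_per_element=2):
    """KV cache estimate: one key and one value vector per token per layer."""
    return embedding_length * context_length * 2 * num_layers * bytes_per_element


size = kv_cache_bytes(embedding_length=5120, context_length=131072, num_layers=48)
print(f"{size / 1e9:.1f} GB")  # prints: 128.8 GB (full context, fp16)
```

Passing `bytes_per_element=1` models an 8-bit cache, which halves the estimate under the same formula.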

- Calculate OLLAMA_CONTEXT_LENGTH:

  DEFINITION: DOCS: The OLLAMA_CONTEXT_LENGTH specification is the context window size (in tokens): how much text the model can “see” at once during inference:

  | Use Case | Range |
  |---|---|
  | Default if unset | 2048 |
  | Chat / Q&A | 2048 – 4096 |
  | Document summarization | 8192 – 32768 |
  | Long-form coding/analysis | 16384 – 65536 |

- Pull the model down to your machine:

      ollama pull "$MY_MODEL_ID"  # download

Start Ollama

- To run Ollama in the foreground, start the service using the memory specification:

      OLLAMA_CONTEXT_LENGTH=64000 OLLAMA_FLASH_ATTENTION="1" OLLAMA_KV_CACHE_TYPE="q8_0" /opt/homebrew/opt/ollama/bin/ollama serve

  Alternately, to have ollama start/restart on every login, always running in the background (taking up RAM):

      brew services start ollama

- While running, identify the CONTEXT memory being used:

      ollama ps

  For example:

      NAME             ID              SIZE      PROCESSOR    CONTEXT    UNTIL
      gemma3:latest    a2af6cc3eb7f    6.6 GB    100% GPU     65536      2 minutes from now
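If you want to read the CONTEXT value from a script rather than by eye, the `ollama ps` output can be parsed. This is a sketch that assumes the column layout of the sample above; the regular expression and function name are hypothetical and may need adjusting if Ollama changes its output format.

```python
import re

# One data row of `ollama ps`; the column layout is assumed from the sample in the text.
ROW = re.compile(
    r"^(?P<name>\S+)\s+(?P<id>\S+)\s+(?P<size>[\d.]+ \w+)\s+"
    r"(?P<processor>.+?)\s+(?P<context>\d+)\s+(?P<until>.+)$"
)


def parse_ps(output):
    """Return one dict per loaded model, skipping the header and blank lines."""
    rows = []
    for line in output.strip().splitlines():
        line = line.strip()
        if not line or line.startswith("NAME"):
            continue
        match = ROW.match(line)
        if match:
            row = match.groupdict()
            row["context"] = int(row["context"])
            rows.append(row)
    return rows


sample = """NAME ID SIZE PROCESSOR CONTEXT UNTIL
gemma3:latest a2af6cc3eb7f 6.6 GB 100% GPU 65536 2 minutes from now"""
print(parse_ps(sample)[0]["context"])  # prints: 65536
```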

Anthropic API Key

- To tell Claude to use Ollama locally instead: VIDEO:

      export ANTHROPIC_API_KEY=""
      export ANTHROPIC_BASE_URL=http://localhost:11434
      export ANTHROPIC_AUTH_TOKEN=ollama

Claude Desktop app Install

- Install pre-requisite utilities (NodeJs):

      brew install node
      winget install OpenJS.NodeJS.LTS  # on Windows
      node --version

  v20.18.0

- Click “Download desktop app” (claude.dmg to install on macOS) at https://claude.ai or https://claude.com/download. Alternately, open your Terminal app and run:

      curl -fsSL https://claude.ai/install.sh | bash

  PROTIP: We do not recommend “brew install” because it can be out of date, even though it’s more convenient since Homebrew installs to /opt/homebrew/bin for all apps.

- Edit your ~/.bashrc and .zshrc file to ensure that the program will be first in the OS $PATH by adding this at the bottom of the file:

      echo 'export PATH="$HOME/.local/bin:$PATH"' >> ~/.bashrc && source ~/.bashrc

- Confirm installation location:

      whereis claude

  Claude was not installed if you see:

      bash: claude: command not found

  Otherwise you should see this (where ~ is replaced with /Users/your machine username):

      claude: ~/.local/bin/claude

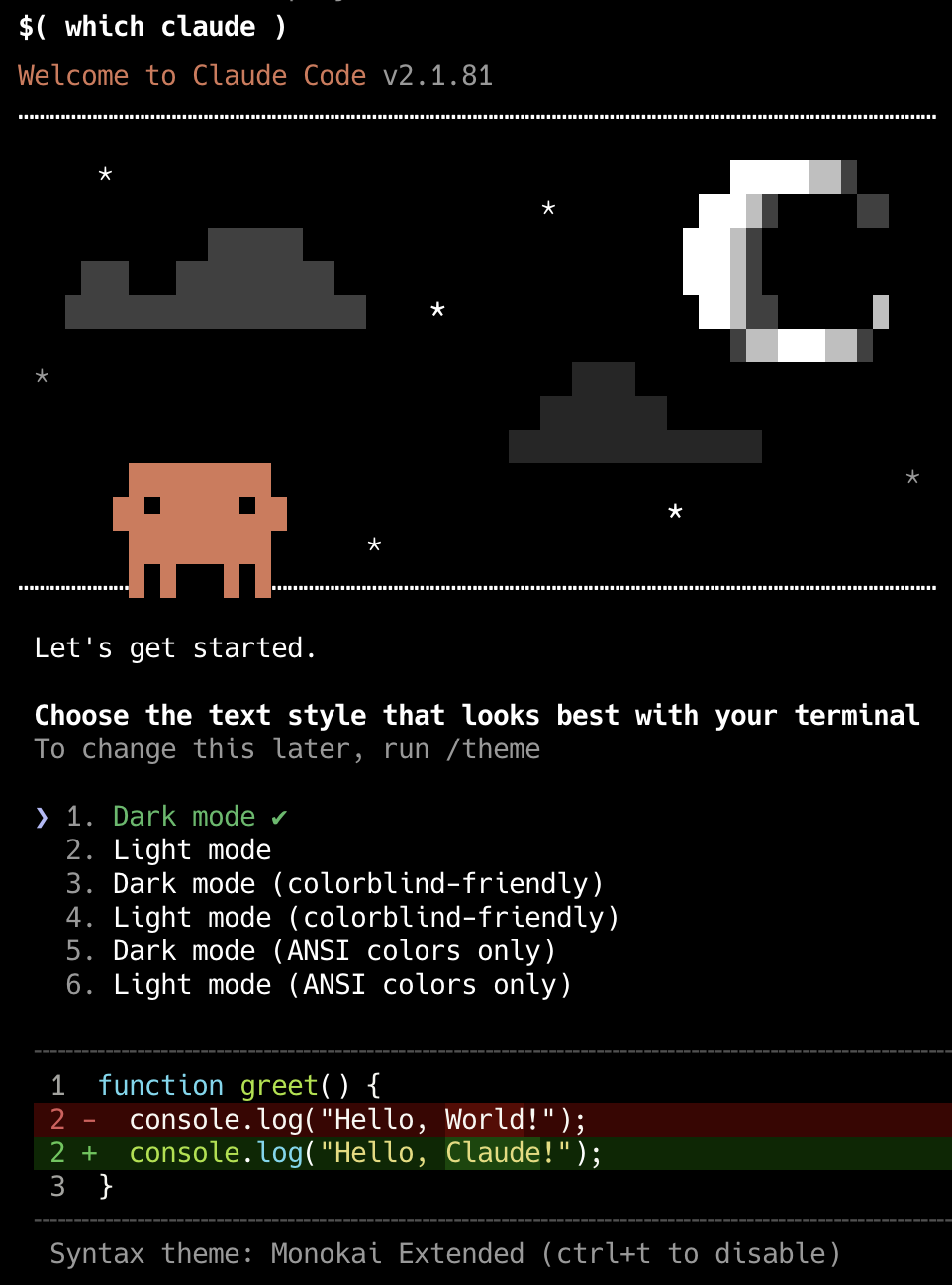

- Open the claude app:

      $(which claude)

  That’s the equivalent of:

      ~/.local/bin/claude

  Alternately, more simply since the path is within $PATH:

      claude

  Alternately, to begin Claude with the context/history from the previous session:

      claude --resume

  Alternately, to begin Claude with the context/history from the previous session but still be able to get back to that point later:

      claude --resume --fork-session

  Remember that aliases were set up:

      clf

  REMEMBER: At the user root folder there is a .claude.json file containing settings for GrowthBook.io, a popular open-source platform for feature flagging and experimentation, named “tengu”.

- Expand your Terminal window (drag a side wider or press command+shift+minus) before listing parameters:

      claude --help

- Confirm installation success:

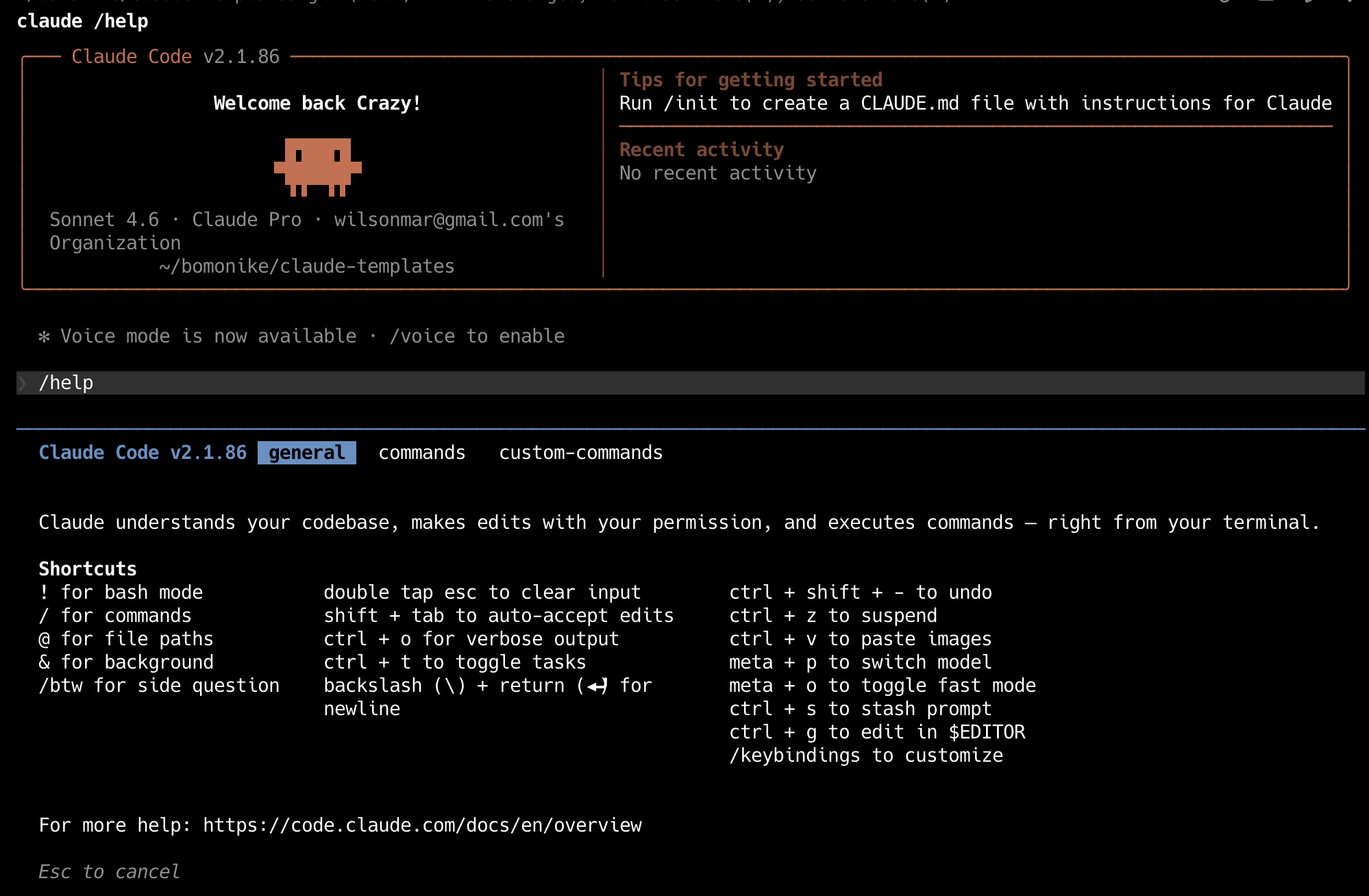

    claude --version

This should reflect the latest release at https://github.com/anthropics/claude-code/releases which was, at time of this writing:

2.1.86 (Claude Code)

PROTIP: Notice that Claude is updated daily. So end your day with a backup and start your day with an update.
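Because releases arrive so often, a small script can compare the installed version string against a release tag. This is a sketch: the helper names and the "v2.1.90" tag are hypothetical examples, and the parsing assumes a plain major.minor.patch number as in the output shown above.

```python
import re


def parse_version(text):
    """Extract (major, minor, patch) from text such as '2.1.86 (Claude Code)'."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())


def is_outdated(installed, latest):
    """True when the installed version is older than the latest release."""
    return parse_version(installed) < parse_version(latest)


# "v2.1.90" is a made-up release tag used only for illustration.
print(is_outdated("2.1.86 (Claude Code)", "v2.1.90"))  # prints: True
```

Comparing integer tuples rather than strings avoids ranking "2.1.9" above "2.1.10".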

Start Claude

REMEMBER: You can specify what model (LLM) to use when you start Claude.

- PROTIP: In a CLI, define your model id variable such as:

      MY_MODEL_ID="deepseek-v4-pro:cloud"

  CAUTION: Do not insert spaces to the left/right of “=” in such CLI commands.

  Using a variable enables you to copy commands below and switch to the CLI to paste them (with command+V):

- Chat with the model like Google & ChatGPT Question & Answer:

      ollama run "$MY_MODEL_ID"

- To use DeepSeek-V4-Pro with Claude Code, run:

      ollama launch claude --model "$MY_MODEL_ID"

- For use with OpenClaw:

      ollama launch openclaw --model "$MY_MODEL_ID"

- For use with Hermes Agent:

      ollama launch hermes --model "$MY_MODEL_ID"

### /login = First-time Authentication

-

The first time that Claude runs:

???

- Continue to browser.

- Claude Code would like to connect to your Claude chat account

- Click “Authorize”.

- Press command+W to close the browser window.

- Click “Copy Code”. Press command+tab to switch to VSCode.

- Click on the entry until the orange border appears.

-

Press command+V to paste. Click “Authorize”.

PROTIP: Press shift+command and + or - to make fonts larger or smaller. But that adjusts for all panes. So many prefer to view Claude Code standalone rather than within VSCode.

PROTIP: Ideally, use three monitor screens: Terminal for Claude Code, Visual Studio (vertical view), Tutorial screen.

- Check Authentication status:

      claude auth status

- To disable Authentication:

      claude auth logout

  REMEMBER: Log out before setting up auth for 3rd-party clouds (Amazon, GCP, Microsoft, etc.)

  From Google VertexAI after installing gcloud cli:

      export ANTHROPIC_???_API_KEY="..."
      export CLAUDE_CODE_USE_???=1

  From Microsoft Foundry Project API Key:

      export ANTHROPIC_FOUNDRY_API_KEY="..."
      export CLAUDE_CODE_USE_FOUNDRY=1

Model Cost Comparison

- Consider the model to use:

      MY_MODEL="kimi-k2.5:cloud"
      ollama pull "$MY_MODEL"  # on Claude's cloud (AWS)
      OLLAMA_CONTEXT_LENGTH=64000 ollama serve
      claude --model "$MY_MODEL"

  CAUTION: “cloud” in the model ID means access in the cloud and loss of privacy.

The Kimi K2.5 model features a 1T-parameter Mixture-of-Experts (MoE) Transformer architecture with 32B activated parameters. It supports image, video, PDF, and text inputs up to 256K tokens and excels in benchmarks like MMMU-Pro (78.5), SWE-Bench Verified (76.8), and AIME 2025 (96.1). Trained on approximately 15 trillion mixed visual and text tokens, it enables native multimodality, cross-modal reasoning, and efficient tool use grounded in visual data.

Using a free model means that you can use automatic /loop to iterate through many results, then select the best, like a Monte Carlo simulation.
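The "iterate many times, then pick the best" idea is essentially best-of-N sampling. The sketch below uses stand-ins for both the model call and the scoring function; `best_of_n`, `guess`, and `closeness` are hypothetical names, and a real setup would replace them with a model request and a test suite or rubric.

```python
import random


def best_of_n(generate, score, n=20, seed=0):
    """Call generate() n times and keep the highest-scoring candidate."""
    rng = random.Random(seed)
    candidates = [generate(rng) for _ in range(n)]
    return max(candidates, key=score)


# Stand-ins: a "model" that guesses numbers and a scorer that prefers guesses near 42.
def guess(rng):
    return rng.randint(0, 100)


def closeness(value):
    return -abs(value - 42)


print(best_of_n(guess, closeness))
```

With a free model the cost of a larger `n` is mostly time, which is what makes this approach practical.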

- Using the “DeepSeek V4 Flash (high)” (from China) yields 75% of performance at 1% of the cost.

- Using the “DeepSeek V4 Pro” is the best price/performance

WARNING: According to Michael Kratsios (@mkratsios47), models from China (Kimi, DeepSeek, etc.) were created (stolen) by (adversarial) distillation of Anthropic’s models.

- The LM Studio GUI using the MLX backend can produce 20 to 30 percent faster generation for the same model on the same hardware.

VIDEO: Instead of “Download” at https://lmstudio.ai/blog/claudecode

    brew install --cask lm-studio

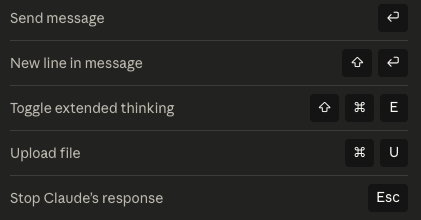

Claude Desktop Key Shortcuts

PROTIP: Instead of moving your mouse and clicking the icons, it’s faster to hold down the command key and press the key indicated.

QUESTION: How to get shortcut keys for other menu items?

/ slash commands

- Type just the / slash character for a menu:

      /init
      /batch  # orchestrates large-scale changes across your entire codebase: decomposes work into 5 to 30 independent units, presents a plan for approval, then spawns one background agent per unit in an isolated git worktree.
      /claude-api  # loads Claude API reference material for your project's language. These are like bundled skills but built-in.
      /compact  # summarizes the conversation and replaces the current context with the summary.
      /context  # token usage by each system component
      /debug  # Shows config loading details and full context composition.
      /extra-usage
      /heapdump
      /loop
      /pr-comments
      /release-notes
      /review
      /security-review
      /simplify
      /update-config
      /schedule

Others:

/help # menu below: /connect # establish connection /start # Begin a new session /terminal-setup # ??? /memory # /statusline # below the prompt defined in customizable ~/.claude/statusline.sh /settings # menu /clear # (aka /reset) is faster than exiting and starting Claude Code again. /search # through the database /upload # files exit # from Claude UI/CLI program

/cost # tokens spent, now shown in /usage
/stats # tokens spent, now shown in /usage
/status # overview of your current Claude Code setup
/config # configuration
/loop
/doctor
/help # for Shortcuts

REMEMBER: Just as within Jupyter Notebook, run shell commands prefixed with the ! modifier. For example, ! pwd will run the pwd command and insert the output right into the conversation.

/model switch

/model default # to switch to the Sonnet model
/model haiku # to switch to the latest Haiku model.
/model Sonnet (1M context) # to switch to the latest Sonnet model.
/model Opus (1M context) # to switch to the latest Opus model.
/model mythos # new Capybarra March 28, 2026 to Cyber Defenders.
/fast # to speed up Opus model execution.

More slash commands

/insights # file://$HOME/.claude/usage-data/report.html
/effort # Effort Level Controls https://www.youtube.com/watch?v=brLhhkUqcn4&t=18618s max for Opus only. high, medium, low, auto.
/remote-control #
/batch # Batch Tasks & PRs
/simplify # Code Review
/loop # Schedule Prompts
/btw # side question

5-hour window

REMEMBER: Each session is a 5-hour rolling window (at time of this writing). ???

Models reset ???

How Claude Code Works

Anthropic has issued dozens of take-down requests to “claw back” its leak. But https://github.com/oboard/claude-code-rev has restored some functionality using the bun JavaScript package manager and testing utility. bun replaces Node + npm + ts-node + jest + esbuild with a single binary.

The “Deep Dive Claude Code app” presents its analysis of the leak’s 960+ files, 50+ integrated tools, 380K+ lines of code. These 13 chapters take you from the core loop to the full engineering picture, layer by layer.

REMEMBER: The revolution of Claude (and other GenAI products) is that instead of typing precise programming code, you type English sentences that describe how Claude should generate programming code, in markdown format files (with “.md” at the end of file names).

Press # (for “memory mode”) to give instructions that Claude Code saves to the CLAUDE.md files which define your preferences.

Press Shift + Tab to toggle from “Standard” to “Planning Mode” where Claude expands its planning of changes to md files it will make based on your specification.

Type ULTRATHINK ahead of requests to use a Claude “Thinking mode” which applies the maximum depth of reasoning at planning. This invokes Claude to break down complex problems step by step.

WARNING: Additional planning and thinking require additional tokens to be charged.

REMEMBER: Press the Esc key to interrupt Claude.

Within prompts, type @ to begin specifying a file’s path pointing to contents to retrieve in your request to Claude.

Screen shot in a prompt

To take a screen shot on macOS, press command + control + Shift + 4 (adding control to the usual command + Shift + 4 sends the capture to the clipboard instead of a file), which changes the cursor to crosshairs. Position it on the screen, then press and drag to the opposite corner of the box to capture that section to your computer’s invisible clipboard. Click the Claude input field and press control + V to paste. “[Image #1]” would appear to confirm. To the right of that, type a sentence to specify what you want done based on that image.

Custom Slash Commands

VIDEO

The essence of the revolution that is AI is this diagram from the agent-skills GitHub:

PROTIP: To make full use of Claude, instead of diving into coding right away (then making changes later), separate your work into several stages of a development lifecycle, using a slash command at each stage, such as these custom slash commands:

 DEFINE         PLAN          BUILD         VERIFY        REVIEW         SHIP
┌──────┐      ┌──────┐      ┌──────┐      ┌──────┐      ┌──────┐      ┌──────┐
│ Idea │ ───▶ │ Spec │ ───▶ │ Code │ ───▶ │ Test │ ───▶ │  QA  │ ───▶ │  Go  │
│Refine│      │ PRD  │      │ Impl │      │Debug │      │ Gate │      │ Live │
└──────┘      └──────┘      └──────┘      └──────┘      └──────┘      └──────┘
 /spec         /plan         /build        /test        /review        /ship

/build has AI generate/implement all the code.

People collaborate by revising .md files that are consolidated into a “PRD” (Product Requirements Document), a short document that gives a “high level” description of what a product should do, why it exists, and what “done” looks like. It keeps product, design, engineering, and stakeholders aligned on the same goal.

AI generation can be repeated with slight variations so the AI has additional opportunities to get it right, based on the PRD specification.

The sample skills github is by Google cloud leader Addy Osmani, who has examples for several clients:

- Claude Code (recommended)

- Cursor

- Gemini CLI

- Windsurf

- OpenCode

- GitHub Copilot

- AWS Kiro IDE & CLI *

- Codex / Other Agents

The 20 skills in the repo include a “/code-simplify” step for more clarity over cleverness.

What’s really special are the pre-configured specialist personas for targeted reviews, such as the “security-auditor” persona.

Each skill file contains:

- Overview → What this skill does

- When to Use → Triggering conditions

- Process → Step-by-step workflow

- Rationalizations → Excuses + rebuttals

- Red Flags → Signs something’s wrong

- Verification → Evidence requirements

Addy advises “Skills should be specific (actionable steps, not vague advice), verifiable (clear exit criteria with evidence requirements), battle-tested (based on real workflows), and minimal (only what’s needed to guide the agent).”

- VIDEO: Create a folder to hold all custom commands:

mkdir -p ~/.claude/commands

- Create a .md (markdown) file for each custom command.

Claude Folders and Files

REMEMBER: Two folders are created:

Root .claude folder

- Navigate to .claude at the root (above User folders), where Claude keeps its internal folders and files:

- backups

- cache

- downloads

- file-history

- history.jsonl file

- ide

- session-env file

- sessions

- shell-snapshots

- telemetry

User ~/.claude folder

-

Navigate to ~/.claude = $HOME/.claude = /Users/username/.claude

-

Folders copied from my templates:

- agents folder

- commands folder (merged with skills folder)

- hooks folder

- rules folder

- skills folder

https://github.com/forrestchang/andrej-karpathy-skills A single CLAUDE.md file to improve Claude Code behavior, derived from Andrej Karpathy’s observations on LLM coding pitfalls.

*.md Markdown YAML files

-

Files copied from my templates:

REMEMBER: Each .md (Markdown) file begins with “frontmatter” between “---” lines, which is read as YAML rather than rendered as Markdown.

---
name: explain-code.md
description: Explains code with visual diagrams and analogies. Use when explaining how code works or when the user asks "how does this work?"
---

- name: value should reflect the file name of the file.

-

The description consumes room in context memory because Claude uses the text to decide when to load the file.

PROTIP: Begin descriptions with an active verb such as “Fetches”.

- argument-hint (No) — Hint shown during autocomplete, e.g., [issue-number].

- disable-model-invocation (No) — true prevents Claude from auto-loading. Manual /name only.

- user-invocable (No) — false hides from / menu. Claude-only background knowledge.

- allowed-tools (No) — Tools Claude can use without asking when skill is active.

- model (No) — Model override when skill is active.

- context (No) — fork runs in an isolated subagent context.

- agent (No) — Subagent type when context: fork. Options: Explore, Plan, general-purpose, or custom.

- hooks (No) — Hooks scoped to this skill’s lifecycle.

- permissions:

PROTIP: Keep each line below 80 characters so that it’s readable on narrow panes.

Under the frontmatter is markdown content that contains instructions, examples, and file references. It is loaded only when the skill is triggered.
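As a minimal sketch of how the frontmatter and the body described above could be separated, the Python below splits a file on its “---” lines and reads flat key: value pairs. The function name split_frontmatter and the sample text are hypothetical. Real YAML (nested keys, lists) needs a proper YAML parser, and this is not a description of how Claude Code itself reads the file.

```python
def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a Markdown file into (frontmatter fields, body).

    Handles only flat `key: value` lines; it is not a full YAML parser.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no frontmatter fence at the top
    for end in range(1, len(lines)):
        if lines[end].strip() == "---":
            break
    else:
        return {}, text  # opening fence was never closed
    fields: dict[str, str] = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            fields[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return fields, body


# Hypothetical skill file used only for illustration.
SAMPLE = """---
name: explain-code
description: Explains code with visual diagrams and analogies.
---
Walk through the code step by step.
"""

fields, body = split_frontmatter(SAMPLE)
print(fields["name"])
print(body)
```

Keeping the two parts separate mirrors the point made above: the description is always in play, while the body only matters once the skill is triggered.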

REMEMBER: Claude Code has no memory. In every new session, it wakes up with zero context about your project. So history and preferences must be added as context.

CLAUDE.md file

CLAUDE.md is read to provide context at the start of every session.

-

Copy in files from ???

- CLAUDE.md referenced by

- state.md — current state of the project

- architecture.md — how everything fits together

- terraform-CLAUDE.md

- python-CLAUDE.md

- MEMORY.md

- Integrate from those who shared theirs:

- https://github.com/anthropics/courses/blob/master/tool_use/README.md from 2024

- https://github.com/citypaul/.dotfiles/blob/main/claude/.claude/CLAUDE.md

-

https://github.com/jarrodwatts/claude-code-config

- https://github.com/centminmod/my-claude-code-setup?tab=readme-ov-file#alternate-read-me-guides

- Git Worktrees (for Parallel Sessions in Claude Code via Claude Desktop apps)

- https://github.com/Piebald-AI/claude-code-system-prompts?tab=readme-ov-file

-

etc. ???

- https://github.com/Piebald-AI/claude-code-system-prompts?tab=readme-ov-file#system-reminders

-

Customize System prompts using https://github.com/Piebald-AI/tweakcc

- Generate a starter CLAUDE.md as a starting point:

/init

- List folders and files as a tree 3 levels down from ~/.claude/:

brew install eza
eza -T -G -L 3 ~/.claude/

TODO:

├── api
├── web
├── .editorconfig
├── .env.example
├── .gitignore
├── CLAUDE.md
├── README.md
└── docker-compose.yml

-

Edit file CLAUDE.md, the long-term memory file.

The file guides Claude Code (claude.ai/code) when working with code in this repository.

REMEMBER: At the start of each agent session, Claude looks for a CLAUDE.md file in each GitHub repository root, in parent directories for monorepo setups, or in your home folder for universal application across all projects. So the file must be named with uppercase “CLAUDE”, lowercase “.md” (like GitHub looks for “README.md”). Providing this context up front helps agents avoid running incorrect commands or introducing architectural or stylistic inconsistencies when implementing new features.

Each CLAUDE.md file holds markdown-formatted project-specific context that should be repeated in every prompt: Project context (basic rules), About this project, Key directories, Standards, structure, conventions, workflows, style, domain-specific terminology. Example:

-

PROTIP: Keep CLAUDE.md files to a maximum of 100–200 lines. Long files are a code smell and take up precious context. CLAUDE.md should be a routing file, not a knowledge dump.

Point to .claude/rules/*.md for detailed specs and docs/ for architecture. Otherwise it gets so long that Claude skims it and misses the important stuff.

Delete what you don’t need — deleting is easier than creating from scratch.

- “Creating the Perfect CLAUDE.md for Claude Code” by Ivan Kahl January 15, 2026

- https://medium.com/@CodeCoup/i-wasted-8-minutes-per-change-in-claudes-code-heres-what-fixed-it-4baeeef1c07f

- https://github.com/ArthurClune/claude-md-examples which is based on:

- https://github.com/modelcontextprotocol/python-sdk/blob/main/CLAUDE.md

- https://github.com/p33m5t3r/vibecoding/blob/main/conway/CLAUDE.md

- https://github.com/saaspegasus/pegasus-docs/blob/main/CLAUDE.md

- https://github.com/centminmod/my-claude-code-setup

-

Claude Co-Work - “Hand off tasks to Claude and come back to finished work.”

-

Claude Skills “turn expertise, procedures, and best practices into reusable capabilities.” To ensure output follows proven patterns (rather than guessing) for handling PowerPoint pptx files, pptx/SKILL.md is defined.

Claude.com docs on handlers for pdf, Microsoft xlsx, pptx, docx,

Settings menu and keyboard shortcuts

-

Click the Toggle sidebar (squarish) icon to collapse and expand the sidebar menu.

REMEMBER: The “Usage” Settings menu item does not appear until you have a paid subscription.

-

PROTIP: From anywhere in Claude, press shift+command+, (comma) for Claude’s Settings at https://claude.ai/settings/general

But switch off the “AWS Extend Switch Roles” browser extension if that comes up instead.

-

PROTIP: To chat from any screen, switch to a New Chat prompt by pressing shift+command+O (the letter) and start typing. For the pop-up, press command+K or shift+command+I for incognito (for the prompt to not appear among Recents).

REMEMBER: When your cursor is within the chat box, use these keyboard shortcuts:

Projects

References:

- “Upload materials, set custom instructions, and organize conversations in one space.”

- VIDEO

REMEMBER: Unless you go incognito, every time you run Claude in a directory, a Claude Code Project is created under ~/.claude/projects. So review and remove.

-

Click the “Project” on the left menu to provide a way for Claude to remember your preferences and customize its responses to them, so you don’t have to repeat yourself.

PROTIP: If you work with different companies or clients, isolate each by creating a different project containing different information.

-

Click “+ New Project”

TODO: ???

Team/Enterprise subscribers can share a Project among themselves.

Tengu UI Customizations

“Tengu” is the internal codename for Claude Code CLI. The word is a transliteration of (天狗) who are supernatural beings from Japanese folklore, often depicted as skilled warriors and mischievous spirits known for their cleverness and ability to shape-shift.

When there’s no official config option to customize your Claude Code experience, the team behind the cross-platform ($20/mo) Piebald.ai open-sourced a CLI tool, tweakcc, to customize system prompts, add custom themes, create toolsets, and personalize the UI.

As Claude “thinks”, it distracts you with one of 200 whimsical “spinner” verbs (Schlepping, Noodling, Smooshing, Reticulating, etc.) (previously thought 90+).

@DESIGN.md Design System

In your CLAUDE.md file, @DESIGN.md causes Claude to load the DESIGN.md file at the start of every session to specify visual standards of your brand.

## Design System
This project uses a design system defined in @DESIGN.md.
Follow strictly the rules defined in @DESIGN.md for all UI generation.
Do not invent colors, fonts, or spacing values outside the design system.
Match component states (hover, focus, active, disabled) to patterns in @DESIGN.md.

The example within my claude-templates repo says it’s for “Acme Corp” but was open-sourced based on Google Labs’ Stitch Design System and AI tool.

The VoltAgent/awesome-design-md repo contains 69+ ready-to-use DESIGN.md files with HTML previews (light and dark mode).

DESIGN.md combines technical tokens (exact values agents parse) with qualitative rationale (context agents use for judgment) in a single file that every major AI coding tool can read.

Between the “---” lines fencing the YAML front matter are the machine-readable specs: exact colors as hex codes, typography as font families and sizes, spacing as pixel values, and components as token references.

In the body, ## sections explain design philosophy, when to use which tokens, and what to avoid.

- Overview (alias: “Brand & Style”): holistic product description, brand personality, emotional tone

- Colors: palettes with semantic roles and usage guidelines

- Typography: type scale, font pairings, hierarchy rules

- Layout (alias: “Layout & Spacing”): grid models, spacing strategy, responsive behavior

- Elevation & Depth (alias: “Elevation”): shadow and depth techniques

- Shapes: border radius, corner treatments, decorative geometry

- Components: style guidance for UI atoms (buttons, cards, inputs, navigation)

- Do’s and Don’ts: guardrails and common pitfalls

From here, reference the design system in your prompts: “Build a primary button component using the design system in DESIGN.md.” Claude Code reads the tokens, applies the values, and generates code that matches your brand.

Agents

- codebase-search.md

- media-interpreter.md

- open-source-librarian.md

- tech-docs-writer.md

Hooks

- Hooks & Automation Rules

- https://dev.to/gunnargrosch/automating-your-workflow-with-claude-code-hooks-389h

Conceptually Claude hooks are like Git hooks: “run this script whenever X happens,” but for your AI coding workflow. Hooks are small, user‑defined scripts (shell commands, HTTP calls, or model prompts) that run automatically at specific points in a Claude Code session, such as:

- When Claude edits a file.

- When a session starts or ends.

- When a command is about to run or just finished.

They give deterministic control: you can enforce rules (code formatting, security checks, linting, notifications) without relying on Claude’s model to “remember” to do them.
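To make the idea concrete, here is a minimal sketch of the decision logic a “command is about to run” hook might use. It assumes the hook receives a JSON payload with tool_name and tool_input.command fields and that exit code 2 asks Claude Code to block the call; both are assumptions to check against the hooks documentation. The names decide and BLOCKED_PREFIXES are hypothetical.

```python
import json

# Hypothetical deny-list for illustration; adjust to your own policy.
BLOCKED_PREFIXES = ("rm -rf", "sudo ", "git push --force")


def decide(payload_text: str) -> tuple[int, str]:
    """Return (exit_code, message) for a pre-tool-use style payload.

    Assumption: the payload is JSON with "tool_name" and "tool_input" keys,
    and exit code 2 signals that the tool call should be blocked.
    """
    payload = json.loads(payload_text)
    if payload.get("tool_name") != "Bash":
        return 0, ""  # only shell commands are inspected here
    command = payload.get("tool_input", {}).get("command", "").strip()
    for prefix in BLOCKED_PREFIXES:
        if command.startswith(prefix):
            return 2, f"Blocked by hook: {command!r} matches {prefix!r}"
    return 0, ""


# Simulated payload; a real hook script would read sys.stdin instead.
sample = json.dumps({"tool_name": "Bash", "tool_input": {"command": "rm -rf build"}})
code, message = decide(sample)
print(code, message)
```

Because the check is ordinary code rather than a prompt, it gives the same answer every time, which is the deterministic control described above.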

Programming (.py Python, .sh shell files, etc.):

- check-comments.py # checks for excessive comments.

- keyword-detector.py

- skill-reminder.sh

- todo-enforcer.sh

.gitignore

PROTIP: Within the .gitignore file are files generated by Claude:

# Exclude runtime/generated files from hooks

hooks/*.log

hooks/debug.log

hooks/todo-enforcer.config.json

Permissions

- https://www.youtube.com/watch?v=brLhhkUqcn4&t=12194s

shift+tab cycles through the permission modes, so “auto-accept edits” is displayed just because I’m currently in the bypass permissions mode. There is one more permission mode, plan, in which Claude Code will discuss and plan but will not make changes to your files.

### Plan mode workflow

REMEMBER: The revolution to productivity from AI comes from “Plan Mode”, which uses AI to generate a plan rather than “vibe coding” prompts that generate results directly. Generating code from plans is more repeatable and enables several people to review and collaborate.

- Cycle to plan mode by pressing shift+tab twice (switching):

⏸ plan mode on (shift+tab to cycle)

- Write your goals. Build your ability to define objectives clearly.

- Let Claude break it into steps.

- Review and iterate the plan. Ask Claude to improve the plan (in a loop).

-

Ask Claude to add tests to evaluate whether its solution is complete and valid.

- Switch to auto-accept edits mode.

- Set up a container during dev.

- Have Claude execute the plan end-to-end.

- Review output - Refine if needed.

Prompts

-

Type your question or command on top of “How can I help you today?”

REMEMBER, there is a cutoff for when information has been loaded in the model.

Artifacts

- Click “+ New artifacts”. Artifacts are pre-coded small interactive apps such as Productivity Tools.

- Click “Artifacts” on the menu and under its “Inspiration” tab, try:

- Click “Flashcards” and provide a CSV file.

- Click “QR code generator”.

- Click “Trivia” game.

- Click “Better than very” to find more expressive words.

- Click “CSV Data Visualizer”.

-

To create your own automations, consider the “Cowork” button at the top of the Claude app.

Cowork and Projects both require a Pro Plan subscription.

Connectors

-

Click one Category at a time to see what’s available already: Code, Communication, Data, Design, Development, Financial Services, Health, Life sciences, Productivity, Sales and Marketing.

REMEMBER: Most services at the end of the connector (such as Zapier) charge money.

- PROTIP: Instead of clicking “Download” for “Desktop” within “Claude Code environments”,

Setting up Claude Code...
✔ Claude Code successfully installed!
Version: 2.1.81
Location: ~/.local/bin/claude
Next: Run claude --help to get started
⚠ Setup notes:
• Native installation exists but ~/.local/bin is not in your PATH. Run:
echo 'export PATH="$HOME/.local/bin:$PATH"' >> ~/.bashrc && source ~/.bashrc
✅ Installation complete!

WARNING: Installing using curl would require adding to the $PATH in your ~/.zshrc or ~/.bashrc file this line:

export PATH="$HOME/.claude/bin:$PATH"

RECOMMENDED: In a Terminal, install Claude Code:

brew info claude-code
brew install claude-code

Terminal-based AI coding assistant install: 170,173 (30 days), 390,990 (90 days), 585,358 (365 days)
==> Moving App 'Claude.app' to '/Users/johndoe/Applications/Claude.app'

- Confirm app folder location:

tree ~/.claude

folders:

backups cache downloads

-

REMEMBER: The free Claude.ai plan does not include Claude Code access. Upgrade to a Claude Pro, Max, Team, Enterprise, or Console account.

Select the subscription level:

Claude Code can be used with your Claude subscription or billed based on API usage through your Console account.

Select login method:
❯ 1. Claude account with subscription · Pro, Max, Team, or Enterprise
  2. Anthropic Console account · API usage billing
  3. 3rd-party platform · Amazon Bedrock, Microsoft Foundry, or Vertex AI

Documentation:

· Amazon Bedrock: https://code.claude.com/docs/en/amazon-bedrock
· Microsoft Foundry: https://code.claude.com/docs/en/microsoft-foundry
· Vertex AI: https://code.claude.com/docs/en/google-vertex-ai

- Install the ccusage utility program to analyze session logs:

https://github.com/ryoppippi/ccusage/

See ccusage.com/guide/session/reports

### /statusline

By default, there are two lines in the “status line” below the Claude Code prompt:

[Sonnet 4.6] | User

Context .... 0%

REMEMBER: To determine what it displays on its Status Line, Claude references the script file:

~/.claude/statusline.sh

which can be changed by Plugins from the Claude Marketplace.

Among StatusLine Plugins making use of Claude Code’s native statusline API:

-

VIDEO: Optionally install jarrodwatts/claude-hud for the HeadsUpDisplay (HUD) plugin to add up to 4 lines below your input prompt to know if it’s still making progress or is stuck. $80/yr Masterclass

/plugin marketplace add jarrodwatts/claude-hud
/plugin install claude-hud
/reload-plugins # to activate
/claude-hud:setup
/restart Claude Code
code ~/.claude/plugins/claude-hud/config.json

The “add” downloads to folder ~/.claude/plugins/marketplaces/claude-plugins-official

Claude references ~/.claude/plugins/claude-hud/config.json

The HUD updates every ~300ms.

- To remove orphaned auto-installed dependencies:

claude plugin prune now

-

validate accepts $schema, version, and description fields.

Plugins pinned by another plugin’s version constraint auto-update to the highest satisfying git tag.

Plugin error handling distinguishes between conflicting dependencies, invalid versions, and overly complex version requirements.

/status

Example:

❯ /status
Version: 2.1.3
Session name: /rename to add a name
Session ID: 4eb36de6-c9f2-4c22-8ad3-a8232ea6c078
cwd: /Users/gigi
Auth token: none
API key: /login managed key
Organization: Perplexity AI
Email: gigi.sayfan@perplexity.ai
Model: opus (claude-opus-4-5-20251101)
MCP servers: notion ✔, linear ✔, datadog ✔
Memory: user (.claude/CLAUDE.md)
Setting sources: User settings, Shared project settings, Project local settings

Python Project

BLOG: Plan > Scope > Execute > Verify

Plan: “Explain how you’d solve X. No code yet.”

From uv init using prompt:

my-python-app/

├── .claude/

│ ├── settings.json # Your Python/macOS config here

│ └── CLAUDE.md # Optional: project instructions

├── src/

│ └── app.py

├── tests/

│ └── test_app.py

├── pyproject.toml

├── requirements.txt

└── README.md

Python Prompt examples:

-

“Refactor src/app.py for better error handling.”

-

“Add type hints and docstrings to src/app.py.”

-

“Run pytest and fix failures.”

-

“Lint with ruff and apply fixes.”

Settings config

- .claude/settings.json — project-level, commit to share with team.

- ~/.claude/settings.json — global user defaults (see below).

- .claude/settings.local.json — your personal project overrides (gitignored).

- managed-settings.json — enterprise-enforced, can’t be overridden.
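The layering in the list above can be pictured as a merge in which later layers win. The sketch below is only an illustration under that assumption: the function merge_settings and the sample values are hypothetical, and the real tool may combine some keys (such as permission lists) rather than overwrite them.

```python
def merge_settings(*layers: dict) -> dict:
    """Shallow-merge settings dicts; later layers win on key conflicts."""
    merged: dict = {}
    for layer in layers:
        merged.update(layer)
    return merged


# Hypothetical contents of each file, lowest precedence first.
user = {"model": "opus", "theme": "dark"}            # ~/.claude/settings.json
project = {"model": "claude-sonnet-4-6"}              # .claude/settings.json
local = {"effortLevel": "medium"}                     # .claude/settings.local.json
managed = {"autoUpdatesChannel": "stable"}            # managed-settings.json

effective = merge_settings(user, project, local, managed)
print(effective)
```

Passing the managed layer last is what makes it impossible for the other files to override it in this sketch.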

/config

Run /config inside Claude Code’s interactive REPL to edit settings through a UI instead of editing JSON directly. See https://code.claude.com/docs/en/settings

❯ /config

Auto-compact true

Show tips true

Thinking mode true

Prompt suggestions true

Rewind code (checkpoints) true

Verbose output false

Terminal progress bar true

Default permission mode Accept edits

Respect .gitignore in file picker true

Auto-update channel latest

Theme Dark mode

Notifications Auto

Output style default

Language Default (English)

Editor mode normal

Model opus

respectGitignore: true keeps file picking from surfacing ignored files by default.

- Limit use of “latest” which means beta:

"autoUpdatesChannel": "stable",

- Lock Claude’s response language:

"preferredLanguage": "english",

- Set default to less expensive model than “opus” with medium usage vs. high:

"model": "claude-sonnet-4-6",
"effortLevel": "medium",

~/.claude/settings.json config

Configuration choices are stored by Claude in its file ~/.claude/settings.json

REMEMBER: Indent two spaces per level.

- No comma after last item in a list.

- “allow” pre-approves tools so you’re not prompted every time

- “deny” hard-blocks sensitive reads (env files, keys) and destructive commands

- true and false are not encased between quote marks.

- Colons (:) separate each folder specification.
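The syntax rules above are easy to check before Claude ever reads the file. As a small sketch using only Python’s standard json module (the function name check_settings and the sample strings are hypothetical), a trailing comma after the last item is reported as an error, and unquoted true loads as a real boolean:

```python
import json


def check_settings(text: str) -> str:
    """Return "ok" or a short description of the first JSON syntax error."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        return f"line {err.lineno}: {err.msg}"
    return "ok"


good = '{\n  "respectGitignore": true,\n  "permissions": {"deny": ["Read(.env)"]}\n}'
bad = '{\n  "respectGitignore": true,\n}'  # trailing comma after the last item

print(check_settings(good))
print(check_settings(bad))
print(type(json.loads(good)["respectGitignore"]).__name__)
```

Running a check like this on ~/.claude/settings.json after each hand edit catches the most common mistakes listed above.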

global user defaults:

- At the top, for autocomplete in editors like VS Code:

{
  "$schema": "https://json.schemastore.org/claude-code-settings.json",

- Set basis for rules by what environment (vs prod):

"env": {
  "NODE_ENV": "development",
  "GIT_MAIN_BRANCH": "main",
  "PYTHONPATH": "./src:./tests",
  "REPOSITORY_NAME": "data-ai-tickets-template",
  "DATABASE": "ANALYTICS",
  "WAREHOUSE": "DATA_ANALYSIS",
  "SCHEMA": "REPORTING",
  "DATABRICKS_PROFILE_PROD": "production",
  "DATABRICKS_PROFILE_DEV": "development"
},

- Set shell program (not zsh) for compatibility:

"defaultShell": "bash",

- For stronger command isolation, especially in higher-risk environments:

"sandbox": {
  "enabled": true,
  "autoAllowBashIfSandboxed": true,
  "network": {
    "allowedDomains": [
      "pypi.org",
      "files.pythonhosted.org",
      "github.com"
    ]
  }
},

- Enable attribution:

"respectGitignore": true,
"attribution": {
  "commits": true,
  "pullRequests": true
}

- Deny access (like .gitignore for Claude) so tokens are not wasted reading what Claude should not:

"permissions" : {
  "deny": [
    "Read(node_modules/**)",
    "Read(dist/**)",
    "Read(.next/**)",
    "Read(coverage/**)",
    "Read(*.lock)",
    "Read(**/.DS_Store)",
    "Read(**/__pycache__/**)",
    "Read(**/.mypy_cache/**)",

- Deny access to secrets - use calls through a secrets manager instead:

"permissions" : {
  "deny": [
    "Read(.env)",
    "Read(.env.*)",
    "Read(**/.env)",
    "Read(**/.env.*)",
    "Read(./.env)",
    "Read(./.env.*)",
    "Read(./secrets/**)",
    "Read(credentials/**)",
    "Read(**/*.key)",
    "Read(**/*.pem)"
  ]

- Deny mass destructive operations:

"permissions" : {
  "deny": [
    "Bash(sudo:*)",
    "Bash(su:*)",
    "Bash(rm -rf *)",
    "Bash(curl *)",
    "Bash(wget *)",
    "Delete",
    "Bash(git push --force:*)",
    "Bash(git push -f:*)",
    "Bash(git reset --hard:*)"
  ]

- Allow to MCP servers:

"permissions" : {
  "allow": [
    "mcp__playwright",

Notice the two underscores in the name.

- Under permissions -> allow to not need user confirmation:

    "Read",
    "Write(./projects/**)",
    "Write(./documentation/**)",
    "Write(./videos/**)",
    "Bash(brew install *)",
    "Bash(brew upgrade *)",
    "Bash(cat *)",
    "Bash(echo *)",
    "Bash(pwd)",
    "Bash(tree:*)",
    "Bash(git add *)",
    "Bash(git branch:*)",
    "Bash(git commit *)",
    "Bash(git diff *)",
    "Bash(git show:*)",
    "Bash(git status)",
    "Bash(git log *)",
    "Bash(git push)",
    "Bash(ls *)",
    "Bash(npm run *)",
    "Bash(npx *)",
    "Bash(poetry install)",
    "Bash(poetry run *)",
    "Bash(python -m *)",
    "Read(**/requirements*.txt)",
    "Read(**/pyproject.toml)",
    "Bash(uv *)",
    "Bash(ruff check:*)",
    "Read(**/*.py)",
    "Glob",
    "Grep"
  ]

- Useful for debugging if hooks are misbehaving:

],
"disableAllHooks": true,

- When running a shell command, to prevent silent truncation and wasted retries, set a higher number than the default of 30,000 to 50,000:

"BASH_MAX_OUTPUT_LENGTH": "150000",

- In reality, output quality degrades before the default, so trigger before the default 83%:

"autocompact_percentage_override": 75,

- Turn off Claude’s distracting spinner text:

"spinnerTipsEnabled": false,

- Highlight:

"syntaxHighlightingDisabled": false,

- Define extent of thinking output:

"showThinkingSummaries": true,

- Adjust frequency of cleanup instead of default 30 days:

"cleanupPeriodDays": 20,

- Disable writing chat history to disk if you want privacy:

"sessionPersistenceDays": 0,

- Force push of feature branch instead of main branch: VIDEO from Kyle Chalmers’ Github:

"hooks": {
  "PreToolUse": [
    {
      "matcher": "Bash(git commit:*)",
      "hooks": [
        {
          "type": "command",
          "command": "bash -c 'if [ $(git branch --show-current) = \"main\" ]; then echo \"ERROR: Cannot commit to main branch. Create a feature branch first.\" && exit 1; fi'"
        }
      ]
    }
  ]
}

References:

/doctor or CLI claude doctor

Token /context usage

Claude’s context window is 200K, meaning it can ingest 200K+ tokens (about 500 pages of text or more) when using a paid Claude plan. The Claude API can ingest 1M tokens when using Claude Opus 4.6 or Sonnet 4.6.

PROTIP: Take action when token usage is above 50%. See Rewind Mode (Escape x2)

The first line in the example above, “51k tokens (26%)”, is what is currently used. Users of Claude Code on a Max, Team, or Enterprise plan with Claude Opus 4.6 have a 1M token context window.

REMEMBER: The Autocompact Buffer: 45k tokens (22.5%) is reserved for autocompaction. When your conversation approaches the context window limit, Claude summarizes earlier messages to make room for new content. Claude Code does this automatically when the context window fills up, but automatic compaction might keep less important material and throw away useful insights. Summarizing also takes time and requires work space: the context window limit applies to input + output combined, so when autocompaction triggers, the model needs room to generate the summary. Without reserved space, a full context would leave no room for output. So right off the bat, you only have about half the context window for your actual conversation.

System Overhead: The system prompt and tools reserve almost 20k tokens (~10%).
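The percentages quoted above follow from simple arithmetic on a 200K window. The sketch below just reproduces those figures; the constant names are hypothetical, the system overhead is rounded to 20k, and 25.5% is what the display rounds up to 26%.

```python
WINDOW = 200_000             # context window size from the text
AUTOCOMPACT_BUFFER = 45_000  # reserved for autocompaction
SYSTEM_OVERHEAD = 20_000     # "almost 20k" for system prompt and tools, rounded
USED = 51_000                # tokens currently used in the example


def pct(tokens: int, window: int = WINDOW) -> float:
    """Share of the context window, as a percentage with one decimal."""
    return round(100 * tokens / window, 1)


reserved = AUTOCOMPACT_BUFFER + SYSTEM_OVERHEAD
available = WINDOW - reserved

print(pct(AUTOCOMPACT_BUFFER))  # 22.5
print(pct(SYSTEM_OVERHEAD))     # 10.0
print(pct(USED))                # 25.5
print(available)                # 135000
```

Every MCP tool definition added on top of this shrinks the available figure further, before the conversation has even started.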

The more MCP servers are used, the more “MCP Tools” tokens are used. Each tool within an MCP server consumes tokens before it even starts, and each MCP server usually bundles several tools. For example, Notion has tools for:

* create-pages

* create-comment

* update-page

* update-database

/cost tokens spent

❯ /cost

⎿ Total cost: $2.69

Total duration (API): 5m 12s

Total duration (wall): 9h 39m 12s

Total code changes: 10 lines added, 1 line removed

Usage by model:

claude-haiku: 42.1k input, 790 output, 0 cache read, 11.9k cache write ($0.0609)

claude-opus-4-5: 3.4k input, 10.7k output, 1.7m cache read, 235.3k cache write, 1 web search ($2.63)

## /loop

The /loop command parses natural language specifications into the three parameters of a CronCreate call, so it is not just a repetitive “Ralph loop”. It can also schedule a task that fires on a timer in the current Claude Code session. Close the terminal, exit Claude, or lose your connection, and all scheduled tasks vanish.

{

"cron": "*/10 * * * *",

"prompt": "Check the CI status on PR #42 and summarize any failures",

"recurring": true

}
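To show how an “every N minutes” request maps onto the three parameters above, here is a minimal sketch that builds the same dictionary. The function name loop_spec is hypothetical, it handles only simple minute intervals, and it does not reproduce how /loop itself parses natural language.

```python
def loop_spec(every_minutes: int, prompt: str, recurring: bool = True) -> dict:
    """Build a cron/prompt/recurring dict for a simple minute interval."""
    if not 1 <= every_minutes <= 59:
        raise ValueError("this sketch only handles 1-59 minute intervals")
    # "*/N" in the minute field means "every N minutes".
    cron = f"*/{every_minutes} * * * *"
    return {"cron": cron, "prompt": prompt, "recurring": recurring}


spec = loop_spec(10, "Check the CI status on PR #42 and summarize any failures")
print(spec["cron"])
```

The five cron fields are minute, hour, day of month, month, and day of week, so only the first field changes for interval schedules like this one.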

Skills

VIDEO: Skills reduce the pain from copy-and-paste prompting. Skills compound.

Skills collected from others, such as git@github.com:jarrodwatts/claude-code-config.git

- planning-with-files

- rigorous-coding

- web-design-guidelines

- react-useeffect

- vercel-react-best-practices

The entry point for each skill is a SKILL.md file in its own directory. So keep primary instructions in SKILL.md concise while still giving Claude access to rich supporting material when it needs it.

skill-name/

├── SKILL.md # Main instructions (required)

├── assets/ # Spec: templates, resources

├── examples/

│ └── sample-output.md # What good output looks like

├── reference.md # Detailed docs (loaded on demand)

├── references/ # Spec: documentation

│ └── api-spec.md # Detailed specs Claude reads when needed

├── scripts/ # Spec: executable code

│ └── validate.sh # Executable scripts

└── templates/

└── output.md # Template Claude fills in

REMEMBER: Skill folders under ~/.claude/skills/… are usable by all projects.

CAUTION: Description of what the skill does and when to use it must be only one line. Max 1024 characters.
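The two limits in the caution above are simple to check mechanically. This is a minimal sketch with a hypothetical function name, description_problems, that reports which rule a description breaks:

```python
MAX_DESCRIPTION_CHARS = 1024  # limit quoted in the text above


def description_problems(description: str) -> list[str]:
    """Return a list of rule violations; an empty list means it passes."""
    problems = []
    if "\n" in description.strip():
        problems.append("description spans more than one line")
    if len(description) > MAX_DESCRIPTION_CHARS:
        problems.append(
            f"description is {len(description)} chars (max {MAX_DESCRIPTION_CHARS})"
        )
    return problems


print(description_problems("Fetches open issues and summarizes them."))
print(description_problems("Line one\nLine two"))
```

A check like this could run before committing a new SKILL.md so that an over-long description is caught early.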

VIDEO: Agent Skills follow the open Agent Skills standard (released on December 18, 2025), which Anthropic published and which OpenAI supports in its Codex CLI, IDE Extension, and Codex app. Google Gemini and DeepMind adopted it too. Skills are listed on the skillsmp.com marketplace.

https://thenewstack.io/agent-skills-anthropics-next-bid-to-define-ai-standards/ Open Agent Skills spec is at: https://agentskills.io/home

https://www.atcyrus.com/skills Marketplace

Rules

In the rules folder, from git@github.com:jarrodwatts/claude-code-config.git

- comments.md

- forge.md

- testing.md

- typescript.md

https://www.gitguardian.com/files/secrets-management-maturity-model

Vulnerability Scanning

Because Claude understands context and logic, it can catch vulnerabilities that rule-based tools miss, such as flawed business logic, insecure flows, or misuse of libraries.

| Category | Examples |

|---|---|

| Injection | SQL injection, command injection, LDAP injection |

| Secrets | Hardcoded passwords, API keys, tokens |

| Crypto | Weak hashing (MD5/SHA1), insecure random |

| Auth | Broken auth, missing rate limiting |

| Input validation | Missing sanitization, path traversal |

| Dependencies | Outdated/vulnerable imports |

| Deserialization | Unsafe pickle, yaml.load() |

| SSRF / XSS | In web frameworks like Flask/Django |
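As an illustration of the Injection and Crypto rows, here is a small sketch of the kind of code a scan would flag next to its fix. The table, function names, and payload are made up for the example; it uses only the standard-library `sqlite3` and `secrets` modules.

```python
import secrets
import sqlite3


def find_user_unsafe(conn, name):
    # VULNERABLE: user input is spliced into the SQL text (SQL injection).
    return conn.execute(f"SELECT name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn, name):
    # FIX: a bound parameter is treated as data, never as SQL.
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT)")
conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
leaked = find_user_unsafe(conn, payload)  # the injected OR clause matches every row
blocked = find_user_safe(conn, payload)   # no user has that literal name
print(len(leaked), len(blocked))

# Insecure random: prefer `secrets` over `random` for tokens and keys.
session_token = secrets.token_hex(16)
```

The same payload returns every row through the string-built query and nothing through the parameterized one.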

Create an iPhone app

VIDEO: Chris Raroque runs Claude Code Opus inside a Warp client referencing a [paid] mobbin.com design template. Voice dictates changes. Breaks down generation section by section. No hand edits.

Anthropic provides free tutorials at https://anthropic.skilljar.com/

Claude Partner Network

https://claude.com/partners

“Anthropic invests $100 million into the Claude Partner Network” (announced Mar 12, 2026) mentions “technical” Claude Certified Architect (CCA) Foundations certification.

#CAExamPrep

- https://anthropic.skilljar.com/claude-certified-architect-foundations-access-request

“A significant proportion of our $100 million investment will go directly to our partners as direct support for training and sales enablement, and for market development (including work to make customer deployments successful) and co-marketing for joint campaigns and events.”

The Partner Portal at https://partnerportal.anthropic.com/s/login/ provides Academy training materials, sales playbooks used by Anthropic's own go-to-market team, and other co-marketing documentation.

At the Services Partner Directory, enterprise buyers can find firms with Claude implementation experience.

Partners get priority access to new certifications as they roll out.

Additional certifications are planned for sellers, architects, and developers.

Certifications

- Use your personal email to sign up for Anthropic's newsletter.

- Use your personal email to sign in to https://anthropic.skilljar.com

Claude Certified Architect (CCA), Foundations

Exam Domains from Anthropic’s Exam Guide.pdf:

- 27% Agentic Architecture & Orchestration - how agents loop, coordinate with subagents, and enforce rules with hooks vs prompts. STARTER

- The Agentic Loop

- Hub-and-Spoke Architecture

- Prompts vs. Hooks

- Anti-patterns: natural language parsing to determine loop termination; arbitrary iteration caps as the primary stopping mechanism; checking for assistant text as a completion indicator.

- 18% Tool Design & MCP Integration - how Claude connects to external systems and how tool descriptions determine routing.

- Tool Descriptions

- MCP Scoping

- Tool overload

- 20% Claude Code Configuration & Workflows - skills, commands, plan mode, and CI/CD.

- Configuration Hierarchy

- When to Use What

- CI/CD Integration

- 20% Prompt Engineering & Structured Output - structured output with JSON schemas, and validation loops.

- Few-Shot Advantage

- Guaranteed structured output with JSON schemas

- Validation Loop

- 15% Context Management & Reliability

- Context Window Problem (‘lost in the middle’ effect)

- When to Escalate (escalation patterns)

- Error Propagation

- domain5-starter.md

- Building Agents with the Claude Agent SDK covers context management, error propagation, and escalation design

- Agent SDK session docs for resumption, fork_session, /compact

- Everything Claude Code repo by Affaan Mustafa for battle-tested context management patterns, scratchpad files, and strategic compaction. Tools and Tips.
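The Agentic Loop and its anti-patterns from the first domain can be sketched as follows. This is a minimal illustration, not Anthropic's reference implementation: `run_agent_loop`, `fake_model`, and the `add` tool are hypothetical names, and `fake_model` stands in for a real Messages API call while mimicking its `stop_reason` and content-block shapes.

```python
def run_agent_loop(call_model, tools, messages, max_turns=20):
    """Loop until the model stops asking for tools; max_turns is only a safety net."""
    for _ in range(max_turns):
        response = call_model(messages)
        messages.append({"role": "assistant", "content": response["content"]})
        # Terminate on the structured stop_reason, not by parsing assistant text.
        if response["stop_reason"] != "tool_use":
            return response
        results = []
        for block in response["content"]:
            if block["type"] == "tool_use":
                output = tools[block["name"]](**block["input"])
                results.append(
                    {"type": "tool_result", "tool_use_id": block["id"], "content": str(output)}
                )
        # Tool results go back to the model as the next user turn.
        messages.append({"role": "user", "content": results})
    raise RuntimeError("safety cap reached before the model finished")


def fake_model(messages):
    # Stand-in for a real API call: asks for one tool call, then finishes.
    if len(messages) == 1:
        return {
            "stop_reason": "tool_use",
            "content": [
                {"type": "tool_use", "id": "toolu_1", "name": "add", "input": {"a": 2, "b": 3}}
            ],
        }
    return {"stop_reason": "end_turn", "content": [{"type": "text", "text": "The sum is 5."}]}


history = [{"role": "user", "content": "What is 2 + 3?"}]
final = run_agent_loop(fake_model, {"add": lambda a, b: a + b}, history)
print(final["stop_reason"], len(history))
```

The loop ends because the model reported a stop reason other than `tool_use`; the iteration cap exists only to catch a runaway session.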

The community confirms the exam’s focus areas: fallback loop design, Batch API cost optimization, JSON schema structuring to prevent hallucinations, and MCP tool orchestration.
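A validation loop around structured output might look like the sketch below. It assumes a hand-rolled check instead of a full JSON Schema validator. `REQUIRED_FIELDS`, `validate`, and `generate_with_validation` are hypothetical names, and the `generate` callable stands in for a model call.

```python
import json

REQUIRED_FIELDS = {"title": str, "severity": int}  # hypothetical schema


def validate(raw: str) -> list[str]:
    """Return a list of schema errors for a raw model reply (empty list means valid)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append(f"missing field '{field}'")
        elif not isinstance(data[field], expected):
            errors.append(f"field '{field}' must be {expected.__name__}")
    return errors


def generate_with_validation(generate, prompt, max_attempts=3):
    """Retry generation, feeding validation errors back into the next prompt."""
    feedback = ""
    for _ in range(max_attempts):
        raw = generate(prompt + feedback)
        errors = validate(raw)
        if not errors:
            return json.loads(raw)
        feedback = "\nFix these errors and return only JSON: " + "; ".join(errors)
    raise ValueError(f"no valid output after {max_attempts} attempts: {errors}")


# Stand-in generator: the first reply is missing a field, the second is valid.
replies = iter(['{"title": "Login bypass"}', '{"title": "Login bypass", "severity": 3}'])
result = generate_with_validation(lambda prompt: next(replies), "Summarize the finding as JSON.")
print(result)
```

Feeding the specific errors back into the retry prompt is the design choice here; a blind retry would give the model nothing new to work with.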

IBM AI Engineering (Coursera): ML/DL concepts and model deployment; conceptual + hands-on; cloud-agnostic.

Anthropic Academy is at https://www.anthropic.com/learn

https://anthropic.skilljar.com/claude-certified-architect-foundations-access-request

References:

- @hoeem’s X post “I want to become a Claude architect (full course)” provides a set of prompts.

- https://dev.to/mcrolly/inside-anthropics-claude-certified-architect-program-what-it-tests-and-who-should-pursue-it-1dk6

- https://github.com/BayramAnnakov/claude-reflect - A self-learning system for Claude Code that captures corrections, positive feedback, and preferences — then syncs them to CLAUDE.md and AGENTS.md.

Models

Claude Model Family:

| | Claude Opus | Claude Sonnet | Claude Haiku | Mythos |
|---|---|---|---|---|
| Description | Highest level of intelligence | Balance of quality, speed, cost | Most cost-efficient and latency-optimized model | |
| Capabilities (best used for) | Advanced reasoning | Common coding tasks | Quick code completions and suggestions | |
| Cost | Highest | Medium | Lowest | |
| Input/Output $/MTok | $5/$25 | $3/$15 | $1/$5 | |
| Prompt caching Read/Write $/MTok | $0.50/$6.25 | $0.30/$3.75 | $0.10/$1.25 | |
| max_input_tokens (Context window) | 1M tokens | 1M tokens | 200k tokens | |
| max_tokens (Max output) | 128k tokens | 64k tokens | 64k tokens | |
| Tokens/min Input & Output | 30K/8K | 30K/8K | 50K/10K | |
| Comparative Latency | Moderate | Fast | Fastest | |
| Supports Reasoning & Adaptive Thinking | Yes | Yes | No! | |
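The per-MTok prices in the table translate into a request cost with simple arithmetic. This is a rough sketch using only the figures listed above; the `estimate_cost` helper and the token counts are made up for illustration, and real invoices may include items not modeled here (for example web searches).

```python
# Dollars per million tokens (MTok), copied from the table above.
PRICES = {
    "opus": {"input": 5.00, "output": 25.00, "cache_read": 0.50, "cache_write": 6.25},
    "sonnet": {"input": 3.00, "output": 15.00, "cache_read": 0.30, "cache_write": 3.75},
    "haiku": {"input": 1.00, "output": 5.00, "cache_read": 0.10, "cache_write": 1.25},
}


def estimate_cost(model: str, **tokens: int) -> float:
    """Sum price-per-MTok times token count for each kind of token used."""
    rates = PRICES[model]
    return sum(rates[kind] * count / 1_000_000 for kind, count in tokens.items())


# 1M input tokens plus 100k output tokens on Sonnet: $3.00 + $1.50.
print(f"${estimate_cost('sonnet', input=1_000_000, output=100_000):.2f}")
```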

REMEMBER: Each model used has a different ID and version on each cloud: See DOCS: API codes for each Claude Model version list or GET https://api.anthropic.com/v1/models

On AWS, the full model_id = “us.anthropic.claude-3-7-sonnet-20250219-v1:0”
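The GET endpoint mentioned above can be called with the standard library alone. This is a sketch under two assumptions: the request uses the same `x-api-key` and `anthropic-version` headers as the curl examples in this article, and the response body carries the models in a top-level `data` list. The helper names are hypothetical, and `fetch_model_ids` is defined but not called, so nothing touches the network until you invoke it with a real key.

```python
import json
import urllib.request

MODELS_URL = "https://api.anthropic.com/v1/models"


def build_models_request(api_key: str) -> urllib.request.Request:
    """Build the GET request with the same auth headers the curl examples use."""
    return urllib.request.Request(
        MODELS_URL,
        headers={"x-api-key": api_key, "anthropic-version": "2023-06-01"},
    )


def model_ids(payload: dict) -> list[str]:
    """Pull the IDs out of a response body (assumes a top-level 'data' list)."""
    return [entry["id"] for entry in payload.get("data", [])]


def fetch_model_ids(api_key: str) -> list[str]:
    """Perform the network call; requires a valid API key."""
    with urllib.request.urlopen(build_models_request(api_key)) as response:
        return model_ids(json.load(response))
```

To use it, call `fetch_model_ids(os.environ["ANTHROPIC_API_KEY"])` after importing `os`.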

| Feature | Claude Opus 4.6 | Claude Sonnet 4.6 | Claude Haiku 4.5 |

|---|---|---|---|

| Claude API ID | claude-opus-4-6 | claude-sonnet-4-6 | claude-haiku-4-5-20251001 |

| Claude API alias used by API calls | claude-opus-4-6 | claude-sonnet-4-6 | claude-haiku-4-5 |

| GCP Vertex AI ID | claude-opus-4-6 | claude-sonnet-4-6 | claude-haiku-4-5@20251001 |

| AWS Bedrock ID | anthropic.claude-opus-4-6-v1 | anthropic.claude-sonnet-4-6 | anthropic.claude-haiku-4-5-20251001-v1:0 |

| Reliable knowledge cutoff: | - | - | February 2025 |

| Training data cutoff: | - | - | July 2025 |

TODO: Microsoft Foundry?

REMEMBER: The Reliable knowledge cutoff is the date through which knowledge is most extensive and reliable.

Training Data Cutoff is the broader range of data used.

Advanced reasoning:

- Advanced software development, especially large-scale architecting

- Long-running tasks that require sustained focus

- Strategic planning with multi-step problem solving

Common coding tasks:

- Document creation and editing

- Content marketing and copywriting

- Data analysis and visualization projects

- Image analysis

- Process automation

Quick code completions and suggestions:

- Content moderation and filtering

- Data extraction and categorization

- Language translation

- Q&A Systems and knowledge retrieval

- Most high-volume, straightforward text processing tasks

Chat API call using Claude Opus

- Based on https://platform.claude.com/docs/en/get-started

- Get your API key from the Claude Console API keys page.

- Save the value in your Password Manager.

- In a Terminal app, set the environment variable:

export ANTHROPIC_API_KEY='sk...your-api-key-here'

- For API usage, buy $5 of credits from https://platform.claude.com/settings/billing (API keys are at https://platform.claude.com/settings/keys)

- Run the simple-msg.py from https://github.com/bomonike/claude-templates…

```python
import anthropic

# The client reads ANTHROPIC_API_KEY from the environment.
client = anthropic.Anthropic()
message = client.messages.create(
    model="claude-sonnet-4-6",
    max_tokens=1024,
    messages=[{"role": "user", "content": "Hello, Claude"}],
)
print(message.content[0].text)
```

- Run the curl-model-info.sh from https://github.com/bomonike/claude-templates…

curl https://api.anthropic.com/v1/messages \
  -H "Content-Type: application/json" \
  -H "x-api-key: $ANTHROPIC_API_KEY" \
  -H "anthropic-version: 2023-06-01" \
  -d '{
    "model": "claude-opus-4-6",
    "max_tokens": 1000,
    "messages": [
      {
        "role": "user",
        "content": "What are the capabilities of Claude Opus 4.5 and its Reliable knowledge cutoff date and Training data cutoff dates?"
      }
    ]
  }'

An example of the response format (this sample text answers the renewable-energy prompt used under “Text Chat using Claude API”, not the prompt above):

{
  "id": "msg_01HCDu5LRGeP2o7s2xGmxyx8",
  "type": "message",
  "role": "assistant",
  "content": [
    {
      "type": "text",
      "text": "Here are some effective search strategies to find the latest renewable energy developments:\n\n## Search Terms to Use:\n- \"renewable energy news 2024\"\n- \"clean energy breakthrough\"\n- \"solar/wind/battery technology advances\"\n- \"green energy innovations\"\n- \"climate tech developments\"\n- \"energy storage solutions\"\n\n## Best Sources to Check:\n\n**News & Industry Sites:**\n- Renewable Energy World\n- GreenTech Media (now Wood Mackenzie)\n- Energy Storage News\n- CleanTechnica\n- PV Magazine (for solar)\n- WindPower Engineering & Development..."
    }
  ],
  "model": "claude-opus-4-6",
  "stop_reason": "end_turn",
  "usage": {
    "input_tokens": 21,
    "output_tokens": 305
  }
}

- Review Claude token usage at https://platform.claude.com/usage

Multi-Provider

The “AI-6 framework” is described in the February 2026 Packt BOOK: “Design Multi-Agent AI Systems Using MCP and A2A” (on OReilly.com) by Gigi Sayfan, with code in the book's GitHub repo https://github.com/Sayfan-AI/ai-six.

AI Fluency Class

https://www.anthropic.com/learn/claude-for-you

AI Fluency 11-video playlist on YouTube

01 Introduction to AI Fluency

02 The AI Fluency Framework

03 Deep Dive 1: What is Generative AI?

04 Delegation

05 Applying Delegation

06 Description

07 Deep Dive 2: Effective Prompting Techniques

08 Discernment

09 The Description-Discernment Loop

10 Diligence

Text Chat using Claude API

https://platform.claude.com/docs/en/get-started

curl https://api.anthropic.com/v1/messages \
  -H "Content-Type: application/json" \
  -H "x-api-key: $ANTHROPIC_API_KEY" \
  -H "anthropic-version: 2023-06-01" \
  -d '{
    "model": "claude-opus-4-6",
    "max_tokens": 1000,
    "messages": [
      {
        "role": "user",
        "content": "What should I search for to find the latest developments in renewable energy?"
      }
    ]
  }'

Making a request

Multi-turn conversations work because you maintain the chat history yourself: the API is stateless, so each request must resend the earlier messages.

- REMEMBER: Alternate between message roles User and Assistant (the AI).
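Keeping your own history can be as simple as a list of role/content dictionaries. The `Conversation` class below is a hypothetical sketch, and the `send` callable stands in for a real `client.messages.create(...)` call so the example runs without an API key. It enforces the alternation described above.

```python
class Conversation:
    """Holds the message list that must be resent with every request."""

    def __init__(self):
        self.messages = []

    def add(self, role: str, text: str) -> None:
        if role not in ("user", "assistant"):
            raise ValueError(f"unknown role: {role!r}")
        if not self.messages and role != "user":
            raise ValueError("the first message must come from the user")
        if self.messages and self.messages[-1]["role"] == role:
            raise ValueError("roles must alternate between user and assistant")
        self.messages.append({"role": role, "content": text})

    def ask(self, send, text: str) -> str:
        """Record the user turn, send the whole history, record the reply."""
        self.add("user", text)
        reply = send(self.messages)
        self.add("assistant", reply)
        return reply


def fake_send(messages):
    # Stand-in for the API: reports how many messages it received.
    return f"I can see {len(messages)} message(s) so far."


chat = Conversation()
first = chat.ask(fake_send, "Hello, Claude")
second = chat.ask(fake_send, "What did I just say?")
print(first)
print(second)
```

The second call receives three messages, which is how the model "remembers" the first turn.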

Chatbot

PROTIP: By default, Claude wraps returned code in backticks so that it can add explanation text around it. To retrieve just the code, use “stop sequences”:

import json

# Parse as JSON to validate and format